
Superb AI “Proprietary AI Foundation Model Project” Phase 2: Key Takeaways on Digital Twin Assetization

Hyun Kim

Co-Founder & CEO | 2026/02/23 | 7 min read

The battleground for Physical AI is becoming increasingly clear: simulation data. Relying solely on filming and validating every required scenario in the real world is no longer sustainable in terms of time and cost.

NVIDIA recently announced DreamDojo, an open-source interactive world model, and emphasized that it trained a robot world model using human first-person (egocentric) video. This signals that the approach Superb AI adopted early on is aligned with the latest global technology trends.

In Phase 1 of Superb AI’s Proprietary AI Foundation Model Project (hereafter “the Project”), we secured 1.08 million RGB-D frames across 50 Korea-style residential environments. Of those, 300,000 frames were “assetized” into a format usable for training and validation—laying the groundwork to break through the “real-world data bottleneck.” In other words, the egocentric real-world footage collected in Phase 1 has been proven to be a strategic advantage for Superb AI in the race to build world models.

Rather than a phase focused on “collecting more frames,” Phase 2 is an R&D-driven “assetization” stage—using the physical data already secured as raw material to build a robot-trainable virtual physical environment (a digital twin). The goal is to construct three core digital assets—Space, Action, and Object—and use them to build the foundation for robot intelligence.

TL;DR — Key Highlights of Superb AI’s Phase 2

- Phase 2 is defined as an R&D stage focused on creating “materials” for simulation environments.

- Based on the raw data secured in Phase 1, we will build three core assets that simulators can understand: Space, Action, and Object.

- We will integrate these assets into simulation environments to validate a synthetic data generation pipeline—including edge cases.

1. Turning Real-World Data Into Digital Assets

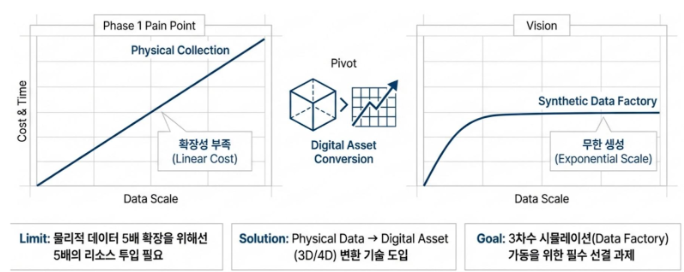

In Phase 1 of the Project, Superb AI secured high-resolution RGB-D data across 50 Korea-style residential environments and processed it into a trainable format, building the foundational infrastructure for Physical AI. However, improving AI performance by continuously expanding real-world data collection has clear limits. Rebuilding locations, actors, and staging for every new shoot is expensive, and capturing rare scenarios (edge cases) is often impractical and can pose serious safety risks. Unexpected situations, such as a cooking tool catching fire or smoke filling the space and obstructing visibility, are difficult and dangerous to stage.

(Sample diagram explaining Superb AI’s Phase 2 strategy)

That is why the core of Phase 2 is not collecting more data but converting real-world captured data into digital assets that can be used in simulation environments. Instead of copying reality as-is, we structure real data into a form that simulators can both understand and manipulate.

In simulation environments, domain randomization makes it possible to change lighting, weather, furniture layouts, and camera angles in seconds, so a single source dataset can yield thousands or even tens of thousands of derived variations. This is a critical prerequisite for operating a true “data factory” that breaks physical constraints and greatly expands both the diversity and scale of data needed for robot learning.
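As a rough illustration of the idea, domain randomization can be sketched as sampling scene parameters from fixed ranges. The parameter names, ranges, and the `SceneVariation` type below are assumptions made for this example; they are not taken from the Project's pipeline or from any simulator API.

```python
import random
from dataclasses import dataclass
from typing import List

# Hypothetical option list; a real pipeline would define its own.
WEATHER_OPTIONS = ["clear", "overcast", "rain"]


@dataclass(frozen=True)
class SceneVariation:
    light_intensity: float    # relative brightness multiplier (assumed range)
    weather: str              # what is visible through the windows
    furniture_layout_id: int  # index into a set of pre-built layouts
    camera_yaw_deg: float     # camera heading in degrees


def sample_variations(n: int, seed: int = 0) -> List[SceneVariation]:
    """Draw n randomized scene configurations from one source scene."""
    rng = random.Random(seed)  # seeded so the same variations can be regenerated
    return [
        SceneVariation(
            light_intensity=rng.uniform(0.3, 1.5),
            weather=rng.choice(WEATHER_OPTIONS),
            furniture_layout_id=rng.randrange(20),
            camera_yaw_deg=rng.uniform(-180.0, 180.0),
        )
        for _ in range(n)
    ]


variations = sample_variations(1000)
```

Seeding the generator is a deliberate choice here: any rendered frame can be traced back to the exact configuration that produced it.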

2. Core Task: Building Three Digital Asset Pillars for Simulation

For meaningful learning to happen in a simulator, you need more than just images. The environment (space), actions, and objects must all be prepared in the form of “assets.”

That is why Phase 2 centers on building the following three pillars:

- Space assets: A 3D environment where robots can move (including physical properties)

- Action assets: Human-performed actions (including body shape and posture)

- Object assets: Interactive objects that robots can manipulate

When these three assets come together, the simulator can generate diverse variation scenarios that do not exist in the real world—such as changes in lighting, layout, movement paths, object states, and rare edge cases.

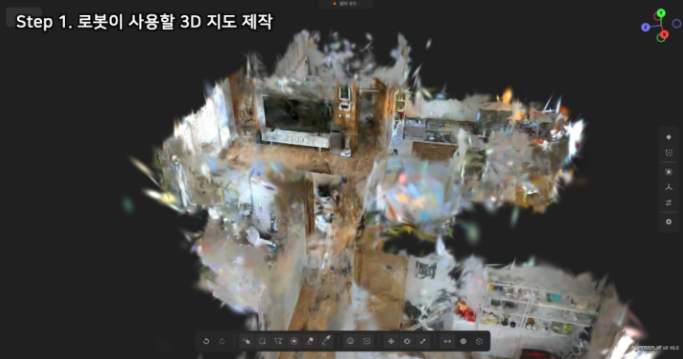

2.1 High-Fidelity 3D Space Assets

Goal: Fully reconstruct 50 Korea-style residential environments as 3D spaces that support physical interaction.

Technical approach: NeRF and 3D Gaussian Splatting

Traditional 3D scanning often produces blurred textures and fails to represent transparent or reflective surfaces such as windows and mirrors. To prevent robots from misinterpreting a glass window as open space and colliding with it, photorealistic visual alignment is essential.

To achieve this, Superb AI is adopting 3D Gaussian Splatting.

- How it works: The method represents an environment as millions of 3D Gaussian (ellipsoidal) particles, reconstructing real-world spaces in photorealistic, high-resolution detail. It preserves the high-quality rendering strengths of NeRF (Neural Radiance Fields) while rendering far faster, which makes it well suited to real-time simulation environments.

- Assigning physical properties: We go beyond visual reconstruction. Using a separate AI model, we analyze structural information such as floors and walls, then assign physics-engine-readable properties—such as floor friction and wall rigidity—based on that structure. This enables robots to drive through the virtual space and run collision tests.
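The property-assignment step can be pictured as a lookup from a surface's semantic label to physics parameters. The following is a minimal sketch under stated assumptions: the labels, the friction values, and the `PhysicsMaterial` type are all hypothetical, and the upstream model that produces the labels is not shown.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class PhysicsMaterial:
    static_friction: float
    dynamic_friction: float
    is_static_collider: bool  # True: immovable geometry the robot can collide with


# Hypothetical values for illustration only; real values would be calibrated.
MATERIALS: Dict[str, PhysicsMaterial] = {
    "floor": PhysicsMaterial(0.6, 0.5, True),
    "wall": PhysicsMaterial(0.8, 0.7, True),
    # Glass still receives a collider so it is never treated as open space.
    "window": PhysicsMaterial(0.4, 0.3, True),
}
DEFAULT_MATERIAL = PhysicsMaterial(0.5, 0.4, True)


def assign_materials(surface_labels: Dict[str, str]) -> Dict[str, PhysicsMaterial]:
    """Map each surface id to a material, falling back to a default for unknown labels."""
    return {
        surface_id: MATERIALS.get(label, DEFAULT_MATERIAL)
        for surface_id, label in surface_labels.items()
    }


assigned = assign_materials({"s1": "floor", "s2": "window", "s3": "sofa"})
```

Keeping a default material means an unrecognized surface still participates in collision tests instead of being silently skipped.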

(Space asset example)

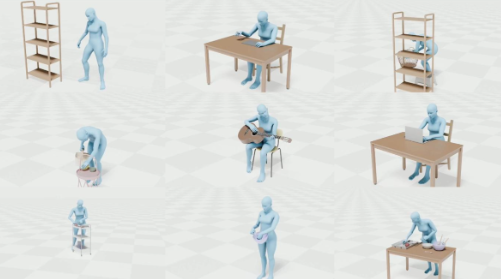

2.2 Dynamic Action Assets

Goal: Convert 5,000 household task scenarios—such as dishwashing and cleaning—into motion data robots can learn from.

Technical approach: SMPL (Skinned Multi-Person Linear Model)

Pixel-level motion in 2D video alone cannot teach a robot “how to extend its arm.” For that, human movement must be extracted as joint-level mathematical data.

- SMPL-based motion capture: Superb AI uses an SMPL model that separates and parameterizes a person’s body shape and pose from video. Without attaching physical markers, it can estimate joint rotations and body deformations in 3D space using video analysis alone.

- Motion retargeting: The extracted motion is stored as an asset in the SMPL format. It can be applied to digital humans of different body types inside a simulator and serves as a core training resource for humanoid robots learning by imitation. Applying it to a robot requires an additional “retargeting” step that converts the motion data to match the robot’s specific joint structure.
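To make retargeting concrete, here is a deliberately simplified sketch. It assumes the standard SMPL body pose layout (24 joints, each a 3-value axis-angle rotation, 72 values in total) and the commonly used joint ordering in which index 19 is the right elbow. The robot joint name, hinge axis, and limits are hypothetical, and projecting an axis-angle vector onto a hinge axis is only an approximation of what a full retargeting method would do.

```python
from typing import Dict, Sequence, Tuple

SMPL_NUM_JOINTS = 24                 # standard SMPL body joints, including the root
SMPL_POSE_DIM = SMPL_NUM_JOINTS * 3  # one axis-angle triple per joint = 72 values

Vec3 = Tuple[float, float, float]


def hinge_angle(axis_angle: Sequence[float], hinge_axis: Vec3) -> float:
    """Component (radians) of an axis-angle rotation about a unit hinge axis."""
    return sum(a * h for a, h in zip(axis_angle, hinge_axis))


def retarget(
    pose: Sequence[float],
    joint_map: Dict[str, Tuple[int, Vec3]],
    limits: Dict[str, Tuple[float, float]],
) -> Dict[str, float]:
    """Map SMPL joint rotations onto single-axis robot joints, clamped to limits."""
    if len(pose) != SMPL_POSE_DIM:
        raise ValueError(f"expected {SMPL_POSE_DIM} pose values, got {len(pose)}")
    robot_pose = {}
    for robot_joint, (smpl_index, axis) in joint_map.items():
        rotation = pose[3 * smpl_index : 3 * smpl_index + 3]
        low, high = limits[robot_joint]
        robot_pose[robot_joint] = min(max(hinge_angle(rotation, axis), low), high)
    return robot_pose


# Hypothetical robot with one elbow hinge about the y-axis, limited to [0, 1.5] rad.
pose = [0.0] * SMPL_POSE_DIM
pose[3 * 19 : 3 * 19 + 3] = [0.0, 2.0, 0.0]  # human elbow bent by 2.0 rad
robot_pose = retarget(
    pose,
    joint_map={"r_elbow": (19, (0.0, 1.0, 0.0))},
    limits={"r_elbow": (0.0, 1.5)},
)
```

The clamp is the important part: a human elbow can exceed what a given robot joint allows, so the retargeted value is cut off at the robot's limit.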

(Action asset examples)

2.3 Intelligent Object Assets

Goal: Assetize 10,000 objects commonly used in household settings into interactive, manipulable objects.

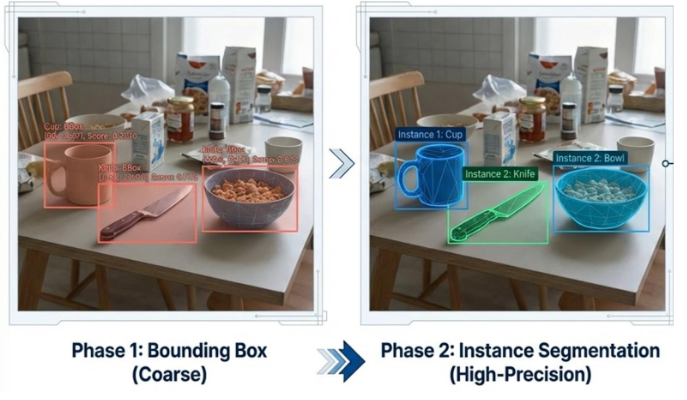

Technical approach: High-precision segmentation and articulated joint modeling

If a microwave in simulation is merely “a box with a microwave picture on it,” a robot cannot practice opening the door. The core of object assetization is interactivity.

- Object separation: Using technologies such as SAM (Segment Anything Model), we segment objects at the pixel level with high precision. Our target is an IoU (Intersection over Union) of 0.7 or higher, even in cluttered scenes with overlapping objects or complex backgrounds.

- Articulated joint modeling: For objects with moving parts—such as drawers, refrigerator doors, and scissors—we define hinge information and motion ranges. This enables robots to train manipulation skills in virtual space, such as pulling out a drawer or closing scissors.
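The IoU target mentioned above can be checked with a few lines of code. This sketch compares two binary masks given as nested lists; the mask values and the `IOU_TARGET` constant name are illustrative, and only the 0.7 threshold comes from the text.

```python
from typing import Sequence

IOU_TARGET = 0.7  # quality threshold stated in the article


def mask_iou(pred: Sequence[Sequence[int]], truth: Sequence[Sequence[int]]) -> float:
    """Intersection over Union of two same-sized binary masks."""
    intersection = 0
    union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            intersection += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Two empty masks agree perfectly, so treat that case as IoU = 1.
    return intersection / union if union else 1.0


truth = [[1, 1, 1],
         [1, 1, 1]]
loose = [[1, 1, 0],
         [1, 1, 0]]  # 4 shared pixels out of a union of 6
tight = [[1, 1, 1],
         [1, 1, 0]]  # 5 shared pixels out of a union of 6

loose_ok = mask_iou(loose, truth) >= IOU_TARGET  # 4/6 is below the target
tight_ok = mask_iou(tight, truth) >= IOU_TARGET  # 5/6 meets the target
```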

(Object assetization examples for household items)
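Hinge information and motion ranges for an articulated object might be recorded along the following lines. The field names and numeric limits are assumptions made for this sketch and do not reflect the Project's actual asset schema.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Articulation:
    name: str
    joint_type: str                   # "revolute" (hinge) or "prismatic" (slider)
    axis: Tuple[float, float, float]  # unit axis of rotation or translation
    lower: float                      # radians for revolute, meters for prismatic
    upper: float

    def clamp(self, value: float) -> float:
        """Restrict a commanded joint value to the allowed motion range."""
        return min(max(value, self.lower), self.upper)

    def open_fraction(self, value: float) -> float:
        """0.0 = fully closed, 1.0 = fully open."""
        return (self.clamp(value) - self.lower) / (self.upper - self.lower)


# Hypothetical limits for two household objects.
fridge_door = Articulation("fridge_door", "revolute", (0.0, 0.0, 1.0), 0.0, 1.9)
drawer = Articulation("drawer", "prismatic", (1.0, 0.0, 0.0), 0.0, 0.4)

pulled = drawer.clamp(0.55)          # a pull past the rail length is capped
half_open = drawer.open_fraction(0.2)
```

With limits stored on the asset itself, a simulator can refuse a motion such as pulling a drawer past its rails instead of letting the robot learn from a physically impossible interaction.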

3. Technical Methodology: Turning On the Synthetic Data Factory

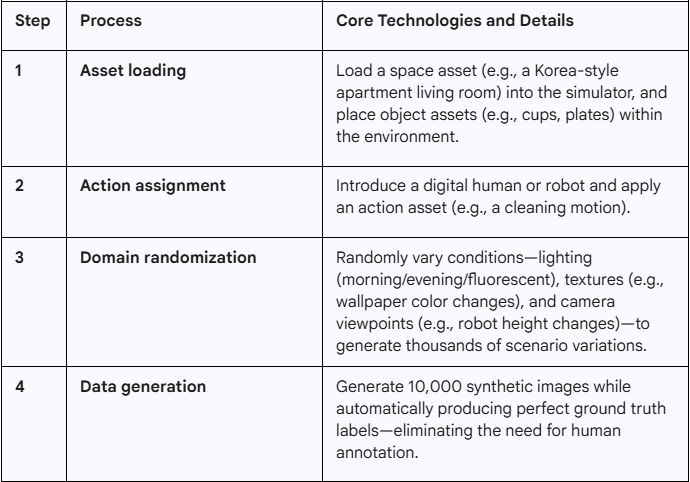

The three asset pillars are integrated through a pipeline built on NVIDIA Isaac Sim—where synthetic data is produced at scale. This is why Phase 2 is defined not as simple data processing, but as a technical R&D effort.

3.1 Synthetic Data Generation Pipeline

3.2 Closing the Sim-to-Real Gap

The biggest challenge of synthetic data is the Sim-to-Real Gap: “Will a robot trained in simulation perform reliably in the real world?” To validate this, Superb AI uses the SSIM (Structural Similarity Index Measure) metric to verify visual alignment between synthetic and real-world images. We also establish strict quality standards—keeping physics-engine collision errors within 10%.
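For readers unfamiliar with SSIM, the sketch below computes a simplified, single-window version of the metric over whole images, using the standard constants C1 = (0.01 L)^2 and C2 = (0.03 L)^2 for dynamic range L. Production implementations usually average SSIM over local windows, so this global variant is only an approximation. The `within_tolerance` helper reads the 10% criterion as a relative error between a simulated and a measured quantity, which is an assumption about how that standard is defined.

```python
from typing import Sequence


def global_ssim(x: Sequence[float], y: Sequence[float], dynamic_range: float = 255.0) -> float:
    """Single-window SSIM over two flattened grayscale images of equal size."""
    n = len(x)
    if n == 0 or n != len(y):
        raise ValueError("images must be non-empty and the same size")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    c1 = (0.01 * dynamic_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * dynamic_range) ** 2  # stabilizes the contrast/structure term
    numerator = (2 * mean_x * mean_y + c1) * (2 * cov_xy + c2)
    denominator = (mean_x ** 2 + mean_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


def within_tolerance(simulated: float, measured: float, tolerance: float = 0.10) -> bool:
    """True if the simulated value is within the relative tolerance of the measured one."""
    return abs(simulated - measured) <= tolerance * abs(measured)


real = [10.0, 50.0, 90.0, 130.0, 170.0, 210.0]
synthetic = [12.0, 48.0, 95.0, 128.0, 165.0, 214.0]  # illustrative pixel values

score_same = global_ssim(real, real)        # identical images score 1
score_close = global_ssim(real, synthetic)  # small differences score slightly below 1
```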

4. Conclusion: Laying the Foundation for Korea’s Robotics Ecosystem—and Leading World Models

The goal of Superb AI’s Phase 2 Project is not “data for our use only.” Through this work, we aim to reduce the time and cost constraints of Physical AI data acquisition and help energize Korea’s robotics and AI ecosystem by opening high-quality 3D and 4D datasets.

We will also integrate the assetized Space, Action, and Object assets into simulation and implement a step-by-step Sim-to-Real framework that covers edge cases as well.

Just as NVIDIA opened a new frontier with robot world models trained on first-person data, Superb AI is strengthening the foundation for building a general-purpose world model—leveraging uniquely high-quality egocentric raw data and the assetization of three core digital pillars. Once this structure is complete, robots will no longer learn by filming more homes. They will learn by generating more situations.

From that point on, Physical AI will no longer be technology that follows reality—it will become technology that prepares for reality ahead of time. Superb AI will successfully complete Phase 2 of the Project, drive qualitative innovation beyond mere data volume growth, and contribute to Korea’s leap toward becoming a global first mover in Physical AI and world models.
