Tech

Building Superb AI’s Synthetic Data Pipeline with NVIDIA Isaac Sim

Hyun Kim

Co-Founder & CEO | 2026/03/09 | 7 min read

Superb AI is building a Multi-Target Multi-Camera (MTMC) 3D tracking system designed for large-scale environments. The goal is simple in concept but extremely challenging in practice:track multiple objects across dozens of cameras and reconstruct their movements across a shared 3D space.

This capability unlocks powerful applications. By understanding how people move through complex environments, organizations can measure crowd density, analyze spatial efficiency, and optimize operations in large venues such as airports, factories, and public infrastructure.

You can see one example of this technology applied at Incheon International Airport here:

But building MTMC systems at scale requires one thing above all else: data.

Training these models requires massive volumes of multi-camera video datasets with precise labels for every target. In real-world environments, collecting this data is extremely difficult. The labeling workload and cost scale with:

- the number of objects

- the number of frames

- the number of camera views

To overcome this challenge, the Superb AI 3D Team turned to synthetic data.

Using the NVIDIA Isaac Sim ecosystem, the team built a synthetic data generation pipeline capable of producing large-scale training datasets for multi-camera tracking models.

In this article—based on an internal talk by engineer Song Chanyoung—we walk through the key technical challenges the Superb AI 3D Team encountered while building this pipeline, and the engineering solutions developed to solve them.

Bridging the Sim-to-Real Gap

Synthetic data offers a powerful advantage: it enables the automatic generation of perfect ground-truth labels, including camera calibration data, bounding boxes, depth maps, and semantic labels.

However, successfully deploying models trained in simulation to real-world environments introduces a number of challenges. These include acquiring realistic synthetic assets, implementing scalable domain randomization, and simulating believable character behavior and crowd dynamics. Addressing these issues is essential for reducing the Sim-to-Real gap.

Drawing on deep expertise in simulation engines and frameworks, the Superb AI 3D Team has developed several engineering solutions to address these challenges.

1. Solving 3D Gaussian Splatting Rendering Conflicts with a Two-Pass Workaround

To reconstruct real-world environments inside the simulator with photorealistic visual fidelity, the team employs 3D Gaussian Splatting (3DGS) technology.

For physical interaction within the simulation environment, mesh primitives are connected to splatting data through proxy primitives (Proxy Prims). In the Isaac Sim and USD environments, proxy prims and matte objects are commonly used to simplify physical computations and occlusion handling without relying on complex original assets.

However, when this property was applied to incomplete meshes, the team encountered a fundamental conflict between rendering quality and labeling accuracy.

- If the mesh was set to invisible, rendering results remained clean and visually correct. However, the annotator could no longer recognize physical occlusion. As a result, characters positioned behind walls were incorrectly labeled as if they were visible.

- On the other hand, when the mesh was set to visible, the annotator behaved correctly—but rendering artifacts appeared in mesh regions, introducing unwanted shadows and distorted lighting.

(Example of resolving the rendering issue in Gaussian Splatting)

To overcome this framework limitation, the Superb AI 3D Team devised a two-pass rendering workaround. In the first pass, meshes are included so the annotator can compute accurate physical occlusion. In the second pass, meshes are excluded, allowing the simulator to produce clean and photorealistic 3DGS rendering. This approach effectively decouples rendering quality from labeling accuracy, ensuring both can be optimized independently.

2. Developing a Custom Annotator Inspired by Human Perception

Isaac Sim’s synthetic data framework, Omniverse Replicator, provides built-in auto-annotation capabilities. However, the default annotator has a limitation: even if an object is almost completely occluded and only a few pixels are visible to the camera, it still generates a large bounding box that includes the entire object.

A human annotator would typically behave differently. If only a tiny portion of the object were visible, it would likely be ignored as noise—or labeled with a much tighter bounding box.

To improve data quality, Superb AI extended the Replicator pipeline by designing a custom annotator that better reflects human perception.

The new algorithm operates as follows:

- First, it uses the system’s semantic segmentation labels to extract only the character pixels that are actually visible in the frame.

- Next, it computes the connected contours of these extracted pixels.

- Contours with areas below a predefined threshold parameter are treated as noise and ignored entirely during bounding box generation.

Through this refined pixel filtering logic, the pipeline can generate large volumes of high-quality bounding box data that closely resemble human annotation—making the data significantly more effective for AI model training.

3. Script-Driven Domain Randomization at Scale

The key to closing the gap between simulation and reality is exposing models to an enormous variety of training scenarios.

To achieve this, the Superb AI 3D Team developed a script-based domain randomization pipeline using Python. This system allows environmental variables to be programmatically controlled and randomized across simulation runs.

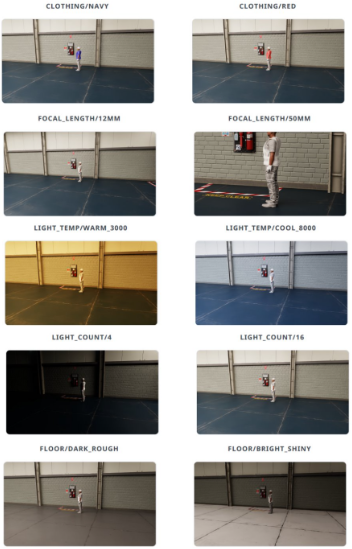

For example, the pipeline can randomly vary the following visual and physical parameters:

Lighting

- Color temperature of light sources

- Number of lights in the scene

- Random sampling of lighting intensity

Camera properties

- Lens parameters such as focal length

Object and environmental materials

- Character clothing colors

- Floor materials and reflectivity

(Example of randomized synthetic data variations)

By iterating through the USD Stage and automatically applying randomized property settings across the scene, the pipeline can generate a massive variety of visual conditions—even from a single base scene. This automated process enables the team to efficiently create diverse, large-scale datasets that significantly improve the generalization performance of AI models.

Pushing the Limits of Simulation-Based Data Generation

The Superb AI 3D Team is not simply relying on out-of-the-box simulator capabilities. Instead, the team deeply analyzes the technical architecture of the NVIDIA simulation ecosystem and pushes beyond its limitations through custom algorithms and engineering innovations.

If you're interested in building high-quality synthetic data pipelines for the era of Physical AI, feel free to connect with the Superb AI team.

Related Posts

Tech

Superb AI “Proprietary AI Foundation Model Project” Phase 2: Key Takeaways on Digital Twin Assetization

Hyun Kim

Co-Founder & CEO | 7 min read

Tech

Superb AI Achieves Phase 1 Milestone in the State-Run Proprietary AI Foundation Model Project (1.08M Robot Data)

Hyun Kim

Co-Founder & CEO | 10 min read

Tech

Download our New Technical White Paper for Free: Vision AI Success Guide – Building a Technical Moat with Data-Centric MLOps

Hyun Kim

Co-Founder & CEO | 3 min read

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high quality training datasets. If you want to experience the transformation, sign up for free today.