Insight

How To Build Ideal Review and QA Workflows for Computer Vision Data Labeling

Hanan Othman

Content Writer | 2022/11/30 | 5 min read

When a model doesn't function as expected or performs poorly, odds are it's an issue that links back to quality, the end-all be-all of what makes ML applications tick the right way, the level of dedication and care given to maintaining high quality standards throughout the entire development lifecycle.

To ensure quality is prioritized and reduce the probability of model performance concerns, especially in later phases of development, any ML team should establish and follow a thorough QA and review workflow early on for their build project.

Although the best QA measures to employ can vary from project to project and their initiatives, there are common standards that can be adapted and easily implemented for most if not all ML applications.

Read on to learn about the components of an ideal and efficient QA workflow as well as how to incorporate them into development processes to help raise model performance and ensure they function consistently outside their training environment.

How to Measure Annotation Quality

Before discussing which QA measures to employ, it's important to first understand what qualifies as properly assessing model performance and what actually goes into an "ideal" degree of capability. For any competent AI system infrastructure, that means being aware of the quantity and quality of data required as well as the quality of the annotations performed on those datasets.

The first step in assessing quality starts at the data acquisition stage, the initial point of the data preprocessing pipeline; with the goal that the data collected is both relevant and can serve as a reliable base for labels to represent a ground truth degree of accuracy on.

The most important assessor of all is how precise annotations are to specific data points according to the labeling technique used; from bounding boxes to keypoint, the overall accuracy of these annotations can be considered equivalent to the resulting performance quality.

To assess how correct or error-prone these annotations are, several QA methods are used like benchmarking, the consensus algorithm and reviews by subject matter experts (SMEs).

Consensus Algorithm

The consensus algorithm is a method to determine the consistency of datasets and is regarded as the number of annotators that agree or disagree with the accuracy of the labels. By striving to achieve consistency, ML teams help to prevent issues like noise or bias. However, since consistency is unreliable as a measurement for assessing quality on its own - methods that focus on and determine accuracy come in handy.

Benchmarks

Benchmarks help with measuring accuracy by enabling annotators to monitor the quality of data, specifically, how precise labels or annotations are to ground truth, the sample set of training data that is used to gauge how accurate annotators are.

Benchmarks not only help with monitoring accuracy and contribute to significantly improving quality in that way, but to identify and hone in on quality losses or fluctuations by monitoring labeling task proficiency and how to improve those specific preprocessing work cycles.

SME Reviews

Following the annotation phase and once labels are applied to datasets, one or more SMEs would act as invaluable assets through the review method and taking on the role of reviewers for preprocessed datasets.

SMEs manually check the accuracy of a portion of the labels, to recognize inconsistencies and areas of improvement to boost accuracy in the labeling process. Unlike benchmarks, reviews focus on the accuracy of the actual annotations rather than individual labelers and resolving those issues for a labeling team based on their members.

Choosing an Assessment Method

Although benchmarks are the most affordable method to asses data quality, it only deals with or "assesses'' a small subset of the training data. Making the other two methods appealing only when considering associated costs depending on the project data volume needs and level of review required.

The Ideal Annotation Review and QA Workflow

In an idealistic scenario, quality measures are integrated into every step of the CV model development cycle; from data tests, code tests, and model tests, and those measures would also be automated to the greatest degree possible, streamlining the entire process from end-to-end and reducing the likelihood of error due to human-in-the-loop (HITL) involvement.

It's as good as public knowledge for data scientists and ML engineers that model work is preferred over data work, but in reality, quality plays an integral part in easing model build struggles, saving time and effort through repetitive testing iterations. Which only makes it in any ML team's best interests to follow a clear Data QA strategy, one that targets the most problematic data quality issues during validation and testing.

QA Process Phases

The typical QA task order or sequence for any CV model project is cyclical; acquiring data, organizing, cleaning, annotating, and finally, once it reaches the QA phase, it might be sent back to be re-annotated or approved and continue on to be used for training.

When breaking down these tasks into smaller QA efforts, the following should be performed.

- Auditing annotations.

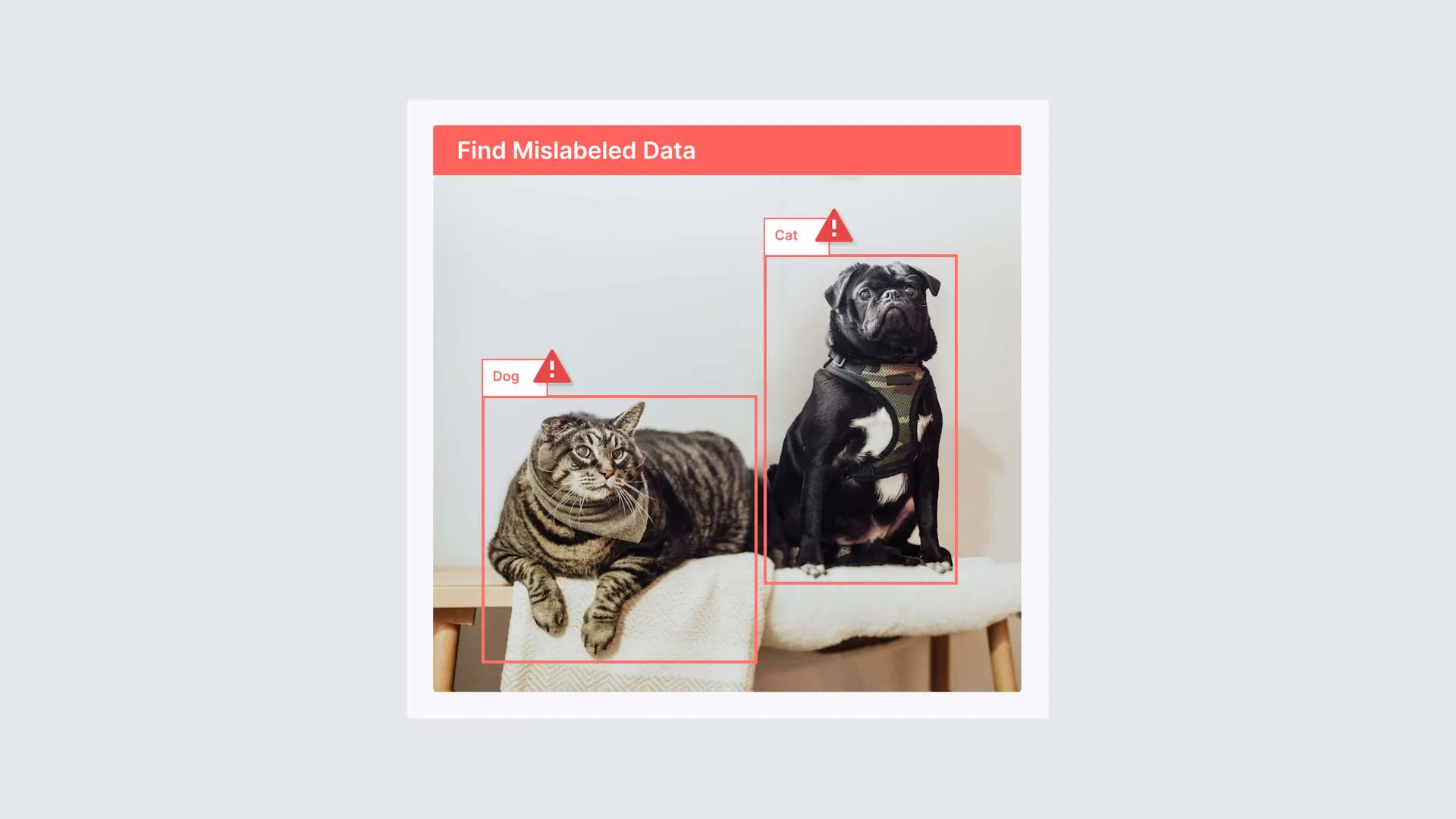

- Reviewing any flagged labels.

- Conducting random QA checks.

Auditing Annotations

Auditing labels in a broad sense, involves verifying the accuracy of labels and making any changes that are required to help them meet a golden or ground "truth" standard of accuracy.

Data labeling teams usually would assign certain members to audit or review labels that have been completed, entrusting them with the ability to approve or reject labels based on a well-defined policy for what an accurate or inaccurate label might look like.

Reviewing Flagged Labels

When an issue comes to light that's specific to one label over another, or even several labels, a proper QA process would anticipate those instances and isolate them with the intention of resolving those issues later or down the labeling pipeline at a later stage of the QA process by a team lead or qualified reviewer.

Having a plan in place for how to separate labels that are potentially flawed or count as an anonyme that annotators might feel uncertain working on; helps to enhance the QA process and aids in fulfilling validation because it nips these errors or uncertainties early on in development, long before it has a chance to impact a model in production.

Random Quality Checks

To get a general idea of how accurate a dataset is, it's worth the consideration of conducting random data quality checks and comparing them to the predefined criteria of what qualifies as accurate labeling as it relates to the project scope and data annotation requirements.

Performing a random check can be done by testing or analyzing a batch of annotations that have been submitted and already approved, or at the individual annotator level. Meaning that the work of certain annotators over others is randomly examined and tested to ensure their quality level and consistency remains high.

An Accurate and Consistent QA Labeling Cycle

Regardless of whether ML specialists are working on the next cutting edge AI application or getting a start-up concept off the ground, no model build can succeed without high quality data. Being able to achieve a higher standard of quality for a project is that much easier if issues are addressed early on and adhere to an established precedent of performance.

Because the amount of effort required for testing and validating ML models is intricately linked to the level of attention and care put into a project's QA efforts. It's tempting to solely focus on evaluating performance and stay locked into monitoring and adjusting model outcomes and predictions.

However, the root of any incorrect outcome can be tracked right back to whether the data was consistent and accurate during the labeling stage. Impact model performance meaningfully by addressing the heart of unwanted behaviors in data by. Set training data up to succeed and lead to an ideal level of performance, born from an ideal approach to assessing and curating the best quality datasets possible.

Related Posts

Insight

⑨ Big Tech Physical AI Trends (1): NVIDIA vs. Google Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 7 min read

Insight

⑧ The Breakthrough for Physical AI? Robotics Training Reimagined by NVIDIA Cosmos

Hyun Kim

Co-Founder & CEO | 15 min read

Insight

⑦ Why Synthetic Data Is the Key to Training Physical AI

Kye-Hyeon (KH) Kim

Chief Research Officer | 5 min read

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high quality training datasets. If you want to experience the transformation, sign up for free today.