⑨ Big Tech Physical AI Trends (1): NVIDIA vs. Google Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 2026/04/01 | 7 min read

![[Physical AI Series 9] Strategy Breakdown: NVIDIA vs. Google](https://cdn.sanity.io/images/31qskqlc/production/0fcfd1f85b0f1597ed38dd936a95c9aebbf57304-2000x1125.png?fit=max&auto=format)

1. A New Phase of the AI Revolution: The Era of Embodiment

The defining technology theme of Q3 2025 is Physical AI.

During this period, the Nasdaq surpassed 20,000 points, extending a powerful rally led by technology stocks. At the same time, the market reflected a more complex reality, with concerns over AI valuations and the so-called “AI bubble” that had been building since late 2024. In November 2025, NVIDIA’s market capitalization approached $5 trillion, while Google (Alphabet) shares rose past $300, signaling that capital markets were increasingly concentrating around companies with AI infrastructure and technologies capable of physical embodiment.

In this installment of Superb AI’s Physical AI series, we analyze the key physical AI technologies, strategic partnerships, and hardware launches announced over the past three months by leading U.S. tech firms such as NVIDIA, Google (Alphabet), Amazon, Tesla, and Meta. The ecosystem is now moving beyond a simple chatbot race and entering an era defined by autonomous agents and humanoid robots operating in factories, logistics centers, and homes.

In Part 1, we examine the key developments from Q3 and Q4 2025 at NVIDIA, which is digitizing the laws of the physical world, and Google, which is bringing high-level reasoning capabilities to robotics.

In Part 2, we will analyze the strategies of Tesla and Amazon.

2. Controlling the Operating System of Reality: NVIDIA’s Dominance in Physical AI Infrastructure

In the second half of 2025, NVIDIA evolved beyond a GPU chipmaker into a full-scale platform company for simulating and controlling the physical world. Through its November 2025 earnings announcement and the CES 2025 keynote, CEO Jensen Huang made it clear that Physical AI would become the next major engine of AI growth and unveiled the software stack and models to support that vision.

2.1 NVIDIA Cosmos: A World Foundation Model With Physical Common Sense

At the center of NVIDIA’s strategy is the NVIDIA Cosmos World Foundation Model platform, first unveiled at CES 2025. Where traditional language models learn the statistical patterns of text, Cosmos learns physical laws, causality, and object permanence, effectively giving robots a form of physical common sense.

2.1.1 Technical Architecture and Differentiation

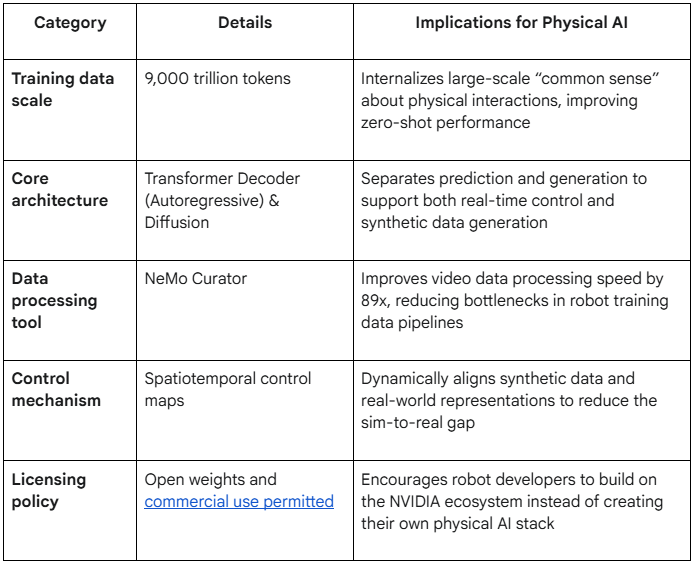

NVIDIA built the Cosmos models by training on approximately 9,000 trillion tokens of data. This allows robots to predict future states even in unfamiliar environments—for example, understanding that “if I push this cup, it will fall and break.” Cosmos consists of two core architectures.

1. Cosmos Autoregressive Model:

- Function: Predicts future physical states based on current observations.

- Technical characteristics: Built on a transformer decoder architecture and incorporates 3D RoPE (Rotary Position Embeddings) to separately encode spatial and temporal dimensions. This is essential for predicting the trajectory of moving objects and simulating how a robot’s actions will affect its environment.

- Applications: Used in the decision-making processes of autonomous vehicles and robots for real-time path planning and collision avoidance.

2. Cosmos Diffusion Model:

- Function: Generates high-resolution, physically grounded video from text prompts or lightweight inputs.

- Technical characteristics: Combines video compression and text integration technologies, effectively enabling unlimited generation of synthetic data for robot training.

- Applications: Used to simulate dangerous or hard-to-capture real-world scenarios—such as fires or collision events—for training robots.

[Table 1] Core Specifications and Capabilities of the NVIDIA Cosmos World Foundation Model
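To make the 3D RoPE idea from the autoregressive model more concrete, here is a minimal sketch in plain Python. It assumes each token's feature vector is split into three chunks, and that each chunk receives a standard 1D rotary embedding keyed to its time, height, or width index. The function names, the `split` sizes, and the `base` constant are illustrative assumptions, not NVIDIA's implementation.

```python
import math


def rope_1d(vec, pos, base=10000.0):
    """Rotate consecutive pairs of `vec` by angles proportional to `pos`."""
    assert len(vec) % 2 == 0, "rotary embeddings need an even dimension"
    out = []
    for i in range(len(vec) // 2):
        # Each pair gets its own frequency, as in standard 1D RoPE.
        theta = pos / (base ** (2 * i / len(vec)))
        x, y = vec[2 * i], vec[2 * i + 1]
        out.append(x * math.cos(theta) - y * math.sin(theta))
        out.append(x * math.sin(theta) + y * math.cos(theta))
    return out


def rope_3d(vec, t, h, w, split=(4, 2, 2)):
    """Encode time, height, and width separately on disjoint chunks.

    `split` (hypothetical sizes) says how many dimensions each axis gets.
    """
    d_t, d_h, d_w = split
    assert d_t + d_h + d_w == len(vec)
    return (
        rope_1d(vec[:d_t], t)
        + rope_1d(vec[d_t:d_t + d_h], h)
        + rope_1d(vec[d_t + d_h:], w)
    )


def dot(a, b):
    """Plain dot product, standing in for an attention score."""
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    query = [0.5, -1.0, 2.0, 0.3, 1.5, -0.7, 0.2, 0.9]
    key = [1.0, 0.4, -0.6, 0.8, -1.2, 0.1, 0.7, -0.3]
    # Same relative offset (3 frames, 4 rows, 2 columns) at two absolute positions.
    near = dot(rope_3d(query, 1, 2, 3), rope_3d(key, 4, 6, 5))
    far = dot(rope_3d(query, 11, 12, 13), rope_3d(key, 14, 16, 15))
    print(near, far)
```

Because each axis rotates its own chunk, the score between two tokens depends only on their relative offset in time, height, and width. That is the property that makes this kind of encoding a natural fit for tracking how objects move across video frames.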

By releasing these models under an open license, NVIDIA is encouraging robot developers worldwide to build on top of CUDA and Isaac. This can be interpreted as an effort to recreate, in the Physical AI era, the same kind of “platform lock-in” that Windows achieved in the PC era and Android achieved in the mobile era.

2.2 The “Mega” Omniverse Blueprint and the AI Superfactory

NVIDIA is not only focused on individual robots. In late October 2025, it introduced the Omniverse Blueprint, an architecture designed to make entire factories intelligent by applying digital twin technology across industrial environments.

- Expansion of industrial partnerships: Siemens, Foxconn, and Toyota have adopted this blueprint. Siemens, in particular, linked its Xcelerator platform with Omniverse so that factory design data can be reflected in simulation environments in real time.

- Power and cooling simulation: One notable aspect of the system is that it simulates not only robot movement paths, but also power consumption and cooling efficiency for data centers inside factories. To manage the massive energy demands of AI robots, NVIDIA integrates with Cadence’s Reality Digital Twin platform to optimize thermal management and airflow.

- Integration with Microsoft: As announced at Microsoft Ignite in November 2025, NVIDIA is working with Microsoft Azure to build an “AI Superfactory.” The goal is to run Omniverse on Azure cloud infrastructure and break down data silos between different 3D tools through the OpenUSD (Universal Scene Description) standard.

2.3 Winning the Edge and the ROS 2 Ecosystem

If the central large-scale model, Cosmos, acts as the brain, then Jetson Thor serves as the nervous system for robots operating at the edge.

- Project GR00T and Jetson Thor: At ROSCon 2025 in Singapore in October 2025, NVIDIA demonstrated Isaac ROS 4.0, the software stack for Jetson Thor, its chip designed specifically for humanoid robots. This allows robots to perform complex visual reasoning and control tasks at the edge, without relying on a cloud connection.

- Establishment of the Physical AI SIG: NVIDIA also launched a Physical AI Special Interest Group (SIG) within the Open Source Robotics Alliance (OSRA). Because the group helps shape the standards of ROS, the global robot operating system ecosystem, it gives NVIDIA a venue for positioning GPU acceleration as a core ROS 2 standard. NVIDIA also open-sourced the Greenwave Monitor tool, which visualizes robot performance bottlenecks in real time, further strengthening the developer ecosystem.

3. The Evolution of the Cognitive Engine: Google’s Multimodal Reasoning and Agent Control

If NVIDIA is focused on simulating the physical world, Google and its research organization DeepMind are focused on giving robots the intelligence and software control needed to understand and execute complex instructions.

3.1 Gemini 3: Spatial Intelligence for Robotics

On Nov. 18, 2025, Google unveiled Gemini 3, its next-generation model. Gemini 3 is not simply a language model. It is built with powerful spatial reasoning capabilities designed with robotic control in mind.

- Spatial reasoning and nuance: Gemini 3 can understand ambiguous instructions such as “clean up that mess,” infer what “that mess” refers to, understand the three-dimensional structure of a room, and reason about where objects should be placed.

- Gemini Robotics: Google also introduced a robotics-specialized version of the general model, called Gemini Robotics. This model demonstrated few-shot learning capabilities, learning new tasks from as few as 100 demonstrations. That offers a meaningful path forward for one of robotics’ hardest problems: chronic data scarcity.

3.2 Project Antigravity: A New Paradigm for Agent Development

Alongside Gemini 3, Google announced a new development platform called Project Antigravity. It is a tool designed to fundamentally reshape the Physical AI development environment.

- AI agents are given the authority to control the code editor, terminal, and browser as a unified workflow.

- If a developer gives a natural language instruction such as, “Write a ROS 2 node that picks up the red ball while avoiding obstacles,” the agent inside Antigravity can write the code, test it in simulation, and revise it on its own if errors occur. It is an attempt to dramatically lower the barrier to entry for robot software development.
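The write, test, and revise cycle described above can be sketched as a simple loop. This is not Antigravity's API, which this article does not describe. `run_agent_loop`, `SimResult`, `toy_generate`, and `toy_simulate` are hypothetical stand-ins: a real system would call a language model in place of `toy_generate` and a robotics simulator in place of `toy_simulate`.

```python
from dataclasses import dataclass


@dataclass
class SimResult:
    """Outcome of one simulated test run (hypothetical structure)."""
    passed: bool
    log: str


def run_agent_loop(task, generate, simulate, max_attempts=3):
    """Generate code, test it in simulation, and revise using the error log."""
    feedback = ""
    history = []
    for attempt in range(1, max_attempts + 1):
        code = generate(task, feedback)
        result = simulate(code)
        history.append((attempt, result.passed))
        if result.passed:
            return code, history
        # Feed the failure log back so the next draft can address it.
        feedback = result.log
    return None, history


def toy_generate(task, feedback):
    """Stand-in for a model call: adds obstacle avoidance only after a collision."""
    steps = ["locate_red_ball()", "pick_up()"]
    if "collision" in feedback:
        steps.insert(1, "avoid_obstacles()")
    return "\n".join(steps)


def toy_simulate(code):
    """Stand-in for a simulator: fails any plan that skips obstacle avoidance."""
    if "avoid_obstacles()" not in code:
        return SimResult(False, "collision with obstacle during approach")
    return SimResult(True, "ok")


if __name__ == "__main__":
    task = "Pick up the red ball while avoiding obstacles"
    final_code, attempts = run_agent_loop(task, toy_generate, toy_simulate)
    print(attempts)
    print(final_code)
```

The point of the sketch is the feedback path: the simulator's error log becomes input to the next draft, so the developer states the goal once rather than debugging each attempt by hand.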

3.3 DeepMind’s Research on Visual Alignment

On Nov. 11, 2025, DeepMind published a paper in Nature titled “Teaching AI to see the world more like we do,” presenting research aimed at aligning robot vision systems with human cognitive structures.

- AligNet dataset: DeepMind built the AligNet dataset, which aggregates human judgment data across millions of images. Models trained on this dataset showed significantly improved robustness, recognizing the same object even when lighting conditions changed or the object was rotated.

- SIMA 2 and transfer from virtual worlds: DeepMind also introduced the SIMA 2 agent, trained in video game environments such as No Man’s Sky and Goat Simulator 3. The agent learned on its own how to use tools and navigate within those worlds, suggesting that such capabilities could transfer to real-world robot navigation.
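One way to picture what "aligning" a vision model with human judgment means is to score how often the model agrees with people on a similarity task. The article does not describe the format of the AligNet judgments, so the sketch below assumes a common one: given three images, pick the odd one out. The embeddings, function names, and the choice of cosine similarity are all illustrative assumptions, not DeepMind's method.

```python
import math


def cosine(a, b):
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def odd_one_out(triplet):
    """Return the index of the item left over after pairing the two most similar."""
    first, second, third = triplet
    # Key = index that is excluded from the pair being scored.
    pair_scores = {
        2: cosine(first, second),
        1: cosine(first, third),
        0: cosine(second, third),
    }
    return max(pair_scores, key=pair_scores.get)


def human_agreement(triplets, human_choices):
    """Fraction of triplets where the model picks the same odd item as people did."""
    hits = sum(odd_one_out(t) == c for t, c in zip(triplets, human_choices))
    return hits / len(triplets)


if __name__ == "__main__":
    # Toy 2D embeddings (made up): two animals and one vehicle.
    cat, dog, car = [1.0, 0.1], [0.9, 0.2], [0.1, 1.0]
    triplets = [(cat, dog, car), (car, cat, dog)]
    human_choices = [2, 0]  # hypothetical labels: people single out the car
    print(human_agreement(triplets, human_choices))
```

A model whose agreement score stays high when an image is rotated or relit is, in this framing, organizing objects the way people do rather than by surface appearance.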

NVIDIA and Google both start from the same core assumption: real-world data is expensive.

NVIDIA addresses that constraint by generating effectively unlimited data in virtual environments through Cosmos. Google addresses it by finding ways to make robots smarter and more controllable with less data through Gemini 3 and Antigravity. These technologies will become critical infrastructure that determines how quickly robots move beyond the lab and into everyday life.

In Part 2, we’ll continue with Tesla, Amazon, Microsoft, Figure AI, and real-world hardware and industrial deployment cases.
