Insight

⑧ The Breakthrough for Physical AI? Robotics Training Reimagined by NVIDIA Cosmos

Hyun Kim

Co-Founder & CEO | 2026/03/20 | 15 min read

![[Physical AI Series 8] NVIDIA: The Strongest Player in Physical AI?](https://cdn.sanity.io/images/31qskqlc/production/11bed83c4d8fb81a019587a69b534c7fc96ec4c9-2000x1125.png?fit=max&auto=format)

Physical AI is moving beyond language models and image generation into the real world—powering robots, autonomous vehicles, drones, and smart factories.

At its core, Physical AI mirrors how humans interact with the world through a continuous loop:

- Perception: understanding the environment through sensors (camera, LiDAR, etc.)

- Decision: reasoning over collected data

- Action: executing decisions through physical actuators (robot arms, legs, etc.)

But scaling Physical AI hits a fundamental bottleneck: the data gap.

1. The Real Challenge: Physical AI Needs a Different Kind of Data

Unlike LLMs trained on internet-scale text, Physical AI requires data grounded in real-world interaction. It’s not just about volume—it’s about structure:

- causal relationships

- physical dynamics

- spatiotemporal context

For example, it’s not enough for a model to know “a cup is on a table.” It must understand “if pushed, the cup will fall.” This makes real-world data collection slow, expensive, and often dangerous—which is why synthetic data is no longer optional—it’s essential.

2. A New Paradigm: World Foundation Models (WFM) to ‘Supercharge’ the Simulation

To address the data bottleneck in Physical AI, NVIDIA introduced World Foundation Models (WFM)—a new class of models designed to understand and generate synthetic representations of the physical world.

WFM goes beyond traditional simulation pipelines that rely on manually rendered 3D assets. Instead, it brings generative AI into the simulation layer, fundamentally changing how training data is created.

From Manual Simulation to Generative Simulation

Traditional approach (artisanal workflows):

- Developers manually build 3D environments using tools like Isaac Sim

- Assets are placed and configured one by one

- Agent behaviors (vehicles, pedestrians) are scripted explicitly

- Requires deep expertise in 3D modeling and simulation

- This process is labor-intensive, slow to scale, and difficult to iterate.

WFM-based approach (generative workflows):

- Developers provide prompts (text, image, or video)

- The model generates complete simulation scenarios automatically

- Environments, actors, and interactions are synthesized end-to-end

For example, when asked “Generate a 30-second scenario of a cyclist crossing an intersection on a rainy night,” The model constructs:

- the scene layout

- weather conditions

- dynamic actors

- temporal interactions and more

This represents a shift in content generation paradigm from ‘code writing and 3D modeling’ to ‘natural language prompting.’

Traditional pipelines required a complex and specialized toolchain, including:

- OpenStreetMap for map data

- Blender for 3D modeling

- JOSM for map editing

- USD pipelines for simulation compatibility

These workflows were inherently fragmented and accessible only to highly specialized 3D simulation engineers.

With Cosmos Predict 2.5, this paradigm changes entirely.

The model unifies text, image, and video generation into a single multimodal system, enabling scenario creation through natural language prompts. Even developers without 3D simulation expertise can now generate large-scale training data by specifying intent—for example:

“Generate 1,000 variations of a high-risk edge-case scenario.”

This abstraction layer removes the need for manual environment construction and significantly lowers the barrier to simulation-driven training.

At a systems level, World Foundation Models automate and democratize synthetic data generation. More importantly, they compress the end-to-end pipeline—from:

idea → synthetic data → trained policy

into a significantly shorter and more iterative loop.

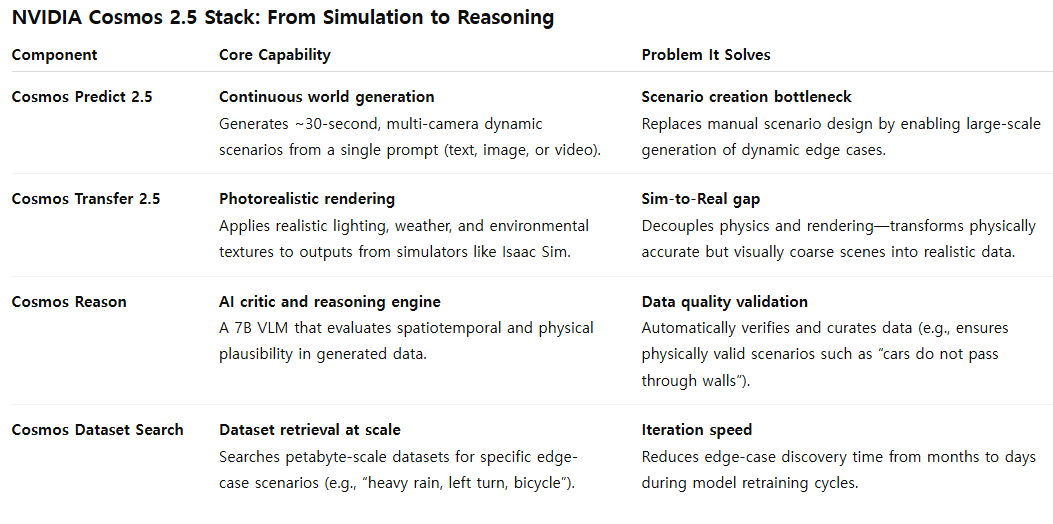

NVIDIA operationalizes this paradigm through Cosmos 2.5, a modular tool suite composed of four core components. Each module maps directly to a critical stage in the data pipeline:

- generation

- realism enhancement

- reasoning and evaluation

- retrieval

To better understand this stack, we can break down each component by its core functionality and the specific bottleneck it addresses.

Table 1: NVIDIA Cosmos 2.5 Stacks: From Simulation to Reasoning

3. Deep Dive: Dissecting the NVIDIA Cosmos 2.5 Stack

The four components of the Cosmos 2.5 stack are designed to work as an integrated system, addressing long-standing bottlenecks across the Physical AI development pipeline—from data generation to validation and iteration.

A. Cosmos Predict 2.5 — Generating ‘Continuous Worlds’

Cosmos Predict 2.5 serves as the generation engine of the stack.

Its core capability is to take a single input—text, image, or video—and generate a multi-view, temporally consistent video sequence (~30 seconds) that represents a coherent physical world.

From a systems perspective, Predict 2.5 consolidates previously separate models (Text-to-Video, Image-to-World, Video-to-World) into a unified, lightweight architecture, improving both efficiency and performance.

Why 30 seconds matter

The key breakthrough is not just generation—but temporal scale.

Earlier models (3–5 seconds):

- capture short-term physical dynamics

- model immediate cause-and-effect relationships

Predict 2.5 (~30 seconds):

- enables long-horizon scenarios

- captures intent, interaction, and decision-making over time

For example, instead of modeling a simple event like:

“a ball bouncing”

It can generate structured behavioral sequences:

- pedestrian approaches crosswalk

- detects oncoming vehicle

- hesitates

- observes vehicle deceleration

- decides to cross safely

This shift enables training data that supports:

- context-aware reasoning

- intent prediction

- multi-agent interaction modeling

In effect, it provides the minimum temporal unit required to move Physical AI from reactive systems to reasoning agents.

B. Cosmos Transfer 2.5 — Closing the Sim-to-Real Gap with Photorealism

Cosmos Transfer 2.5 functions as the realism engine, designed to bridge the Sim-to-Real gap.

It takes as input:

- structurally accurate but visually coarse 3D simulation outputs (e.g., Isaac Sim, CARLA)

And transforms them into:

- photorealistic video outputs, guided by prompts such as: “rainy night, wet road, foggy conditions”

Key architectural insight: decoupling physics and rendering

Transfer 2.5 reflects a deliberate pipeline design:

Step 1 — Physics (Simulation layer)

- Isaac Sim + physics engines generate accurate interactions

- prioritizes correctness over visual quality

Step 2 — Rendering (Post-processing layer)

- Transfer 2.5 applies realistic lighting, materials, and textures

This separation enables:

- faster simulation cycles

- higher visual fidelity

- scalable generation of diverse environments

Notably, despite being 3.5× smaller than its predecessor, Transfer 2.5 achieves:

- improved rendering quality

- better prompt alignment

- reduced error accumulation in long sequences

This makes it a practical and scalable solution for reducing the Sim-to-Real gap.

C. Cosmos Reason — ‘AI Critic’ that Goes Beyond Perception to Physical Understanding

Cosmos Reason acts as both the reasoning engine and the quality control system. It is a 7B parameter Vision-Language Model (VLM) designed for:

- multimodal perception

- spatiotemporal reasoning

- physical plausibility evaluation

Beyond detection: reasoning about the world

Rather than simply identifying objects:

“there is a car”

Cosmos Reason can infer:

“the car may lose traction on a wet surface and collide with a pedestrian”

This represents a shift from:

- perception → causal reasoning

AI as a data critic

Its most important role is in automated data curation.

Given that Predict and Transfer can generate massive volumes of synthetic data:

- a significant portion may be invalid or low-value

- manual inspection at scale is infeasible

Cosmos Reason solves this by:

- evaluating physical consistency

- filtering out invalid scenarios

- scoring data quality

Delivered as a NIM (NVIDIA Inference Microservice), it can be easily integrated into existing pipelines without heavy infrastructure overhead.

D. Cosmos Dataset Search — Accelerating Iteration Cycles from ‘Months’ to ‘Days’

Cosmos Dataset Search provides data retrieval and debugging capabilities at scale.

Its primary function:

- search large-scale datasets (synthetic + real)

- retrieve specific edge-case scenarios via natural language queries

From months to days

Traditionally:

- reproducing failure cases required

- manual simulation

- extensive log analysis

- long iteration cycles (months)

With Dataset Search:

- developers query scenarios directly, such as “heavy rain, unprotected left turn, bicycle”

- relevant data is retrieved in days, not months

Enabling data-centric iteration

This enables a closed-loop workflow:

- model fails

- failure scenario is identified

- matching data is retrieved

- dataset is updated

- model is retrained

This transforms Physical AI development into a fully data-centric MLOps loop, where iteration speed is driven by data accessibility rather than manual debugging.

4. In Practice: Skild AI and Serve Robotics

NVIDIA positions Cosmos not as a research prototype, but as part of a production-ready pipeline:

NuRec (real-world capture) → SimReady Assets → Isaac Sim → Cosmos (data generation & augmentation)

This end-to-end workflow is already being adopted by leading robotics companies.

A. Case 1: Skild AI—Scaling Toward a General Robot Brain

Skild AI is building a general-purpose robotics foundation model that can operate across different embodiments:

- humanoids

- quadrupeds

- robotic arms

This goal introduces a core challenge:

data diversity at scale

Manual simulation workflows cannot generate the breadth of data required for such generalization.

Their approach

Isaac Lab

- large-scale reinforcement learning environment

- trains thousands of robot instances in parallel

Cosmos Transfer

- augments simulation data across environmental dimensions:

- lighting

- textures

- backgrounds

Outcome

- significantly improved robustness

- generalization across environments and embodiments

As Skild AI CEO Deepak Pathak summarizes:

“NVIDIA Isaac Lab and Cosmos enable the generation of scalable data sources required for robots to learn from experience.”

This highlights a key point:

large-scale synthetic data generation is a prerequisite for general robotics intelligence

B. Case 2: Serve Robotics — A Production-Grade Hybrid Data Strategy

Serve Robotics operates over 1,000 delivery robots in real urban environments, with plans to scale further. Their approach provides a clear blueprint for mature Physical AI systems.

The core strategy: hybrid data

Instead of choosing between simulation and real-world data, they combine both:

Real-world data

- 1 million miles of driving per month

- ~170 billion image + LiDAR samples

- used to identify failure cases

Synthetic data (Isaac Sim + Cosmos)

- reproduces rare and dangerous edge cases

- enables large-scale controlled training

Role separation

Their pipeline effectively separates responsibilities:

- Real data → problem discovery

- Synthetic data → problem solving

For example:

- a rare failure observed once in real-world data

- can be reproduced millions of times in simulation

Key insight

The winning strategy is not real vs synthetic but real + synthetic working together

5. Conclusion: Cosmos Solves Data Generation—But Shifts the Bottleneck to Data Curation

The introduction of the NVIDIA Cosmos 2.5 stack marks a clear inflection point in Physical AI development. By leveraging World Foundation Models, NVIDIA has effectively addressed one of the field’s most persistent bottlenecks: data generation at scale.

However, solving data scarcity introduces a new and equally challenging problem—data deluge. We are now entering a phase where:

- Real-world systems generate hundreds of billions of samples

- Generative models like Cosmos can produce virtually unlimited synthetic scenarios

In this environment, the central challenge is no longer generating more data. Instead, the focus shifts to a more critical question:

How do we identify, prioritize, and curate the small subset of data that actually improves model performance?

In other words, the competitive edge in Physical AI is moving from data quantity to data quality and selection.

NVIDIA is clearly aware of this shift. The inclusion of Cosmos Reason and Cosmos Dataset Search reflects a deliberate attempt to address this emerging bottleneck.

- Cosmos Reason acts as an automated evaluator, filtering out physically invalid or low-value data

- Dataset Search enables rapid retrieval of relevant edge cases for debugging and retraining

Together, these components provide essential capabilities for navigating large-scale datasets. However, they remain modular components, not a complete system for managing end-to-end data workflows.

In real-world production environments, the problem is inherently more complex.

Organizations must simultaneously manage:

- synthetic data generated by Cosmos

- simulation data from platforms like Isaac Sim

- real-world operational data collected from deployed systems

These heterogeneous data sources must be:

- unified

- cleaned

- labeled

- continuously fed back into training pipelines

This is not a single-tool problem—it is a data infrastructure problem.

This is where Superb AI’s role becomes strategically significant.

If Cosmos can be understood as a high-performance data generation engine, then the Superb AI platform functions as a data-centric MLOps hub—a system designed to orchestrate the entire lifecycle of data across sources.

Rather than focusing solely on generation, Superb AI addresses the next critical layer: how data is managed, refined, and transformed into model performance.

At the core of this approach is Superb Curate, which directly targets the data curation bottleneck introduced by large-scale generation.

Instead of treating all data equally, Superb Curate:

- analyzes dataset distributions

- identifies class imbalance and scenario bias

- surfaces rare but high-impact edge cases

- detects label noise

Through this process, large volumes of raw data are distilled into high-value training datasets—datasets that meaningfully improve model robustness and generalization.

Ultimately, Cosmos has opened the door to an era where data generation is no longer the limiting factor.

But generating data is only the first step.

The organizations that succeed in Physical AI will be those that can:

- systematically filter signal from noise

- prioritize the right data

- and operationalize it within a continuous training loop

World Foundation Models like Cosmos and data-centric MLOps platforms like Superb AI are not competing solutions.

They are complementary layers in the same stack.

Together, they define a new paradigm for Physical AI—one where scalable intelligence is not just generated, but curated, structured, and continuously improved across the boundary between simulation and reality. In this journey of addressing complex data issues and accelerating Physical AI success, Superb AI will be your best partner.

Related Posts

Insight

⑪ Germany's Physical AI Moment: Siemens, BMW, and the Robot Unicorn Counteroffensive

Hyun Kim

Co-Founder & CEO | 15 min read

Insight

⑩ Big Tech Physical AI Trends (2): Tesla vs. Amazon Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 10 min read

Insight

⑨ Big Tech Physical AI Trends (1): NVIDIA vs. Google Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 7 min read

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high quality training datasets. If you want to experience the transformation, sign up for free today.