Insight

How to Think Through Your Training Data Workflows Like an ML Expert

Hyun Kim

Co-Founder & CEO | 2020/07/01 | 8 min read

I. Introduction

Since the advent of computing, and more so with the proliferation of modern databases, most of our computing needs have relied primarily on “structured data”. However, over time we have steadily entered a new data era, in which unstructured data such as image, video, text, and audio vastly outnumber that of structured data in the overall digital data-verse. Furthermore, Gartner estimates that over 80% of enterprise data is unstructured and this is growing at 55-60% every year. The future lies in unleashing the insights within unstructured data.

Relational databases and other forms of formatted and categorized data, which can be easily stored in a traditional column-row database or spreadsheets like a Microsoft Excel table, are still important. But their long and exclusive reign as the peak of data value is rapidly getting undermined by the pervasiveness and ubiquity of unstructured data. Increasingly, the critical measure of data “value” today predominantly accrues from “unstructured data” that encompasses everything we normally use from documents to images to video and audio streams to social media posts.

But the very nature of unstructured data poses a rudimentary challenge as we cannot easily interpret, search, or analyze it with a computer in the traditional sense. We inevitably require tremendous manual human input to parse, tag, and interpret the given data into a machine-interpretable structured format. Not surprisingly, many organizations still remain hesitant to work with it until recent years.

During 2018, storage suppliers added more than 700 exabytes of storage capacity to the worldwide installed base of all storage media types, according to IDC. From 2018 to 2023, the worldwide installed base of storage capacity will more than double, reaching 11.7 zettabytes in 2023, IDC predicts. If anything, the daunting volumes of unstructured data that have already been collected routinely discourage less experienced teams from even attempting to mine it for useful information and purpose. Until very recently, there has been a lack of available technologies capable enough to extract business value from this diverse yet nebulous source of digital truth.

This isn’t the case anymore. We now have access to many new and innovative data analytics tools powered by artificial intelligence that are created specifically to access the insights available from unstructured data. There is a flourishing ecosystem being born that is aspiring to fill big and important gaps in ML Ops, a rather nascent concept on its own. However, there is a growing concern amongst ML experts that to fully realize the potential of unstructured data, it’s not enough to just employ the latest technology. In fact, organizations need to knock down operational silos in favor of a highly scalable, massively parallel data hub approach that is designed not just to label and store training data but to also allow ML orgs to collaborate, share and, most importantly, seamlessly iterate to produce enterprise-level AI.

This is why ML teams need more than makeshift point solutions when attempting to create enterprise-grade training data pipelines. Instead, ML orgs should require platform sophistication that addresses the need for collaboration, speed, and quality in order to produce and manage quality datasets with the goal of quickly developing production-grade models.

To facilitate ML teams as they embark on this rewarding journey towards building great AI, we have attempted to gather and answer the most important questions as well as provide a handful of cutting edge frameworks to help structure training data processes. By employing some of the tactics and strategies expressed in this paper, ML teams will be able to assess their current systems and build an understanding of how a data platform can become a much more integrated piece to the overall ML Ops workflow. Before we dive into the4 key pillars an ideal machine learning data platform must address, we will start off with an introduction to the basics of a Machine Learning Life Cycle and the key questions to ask yourself to successfully deploy a production-grade ML system.

II. Machine Learning Life Cycle

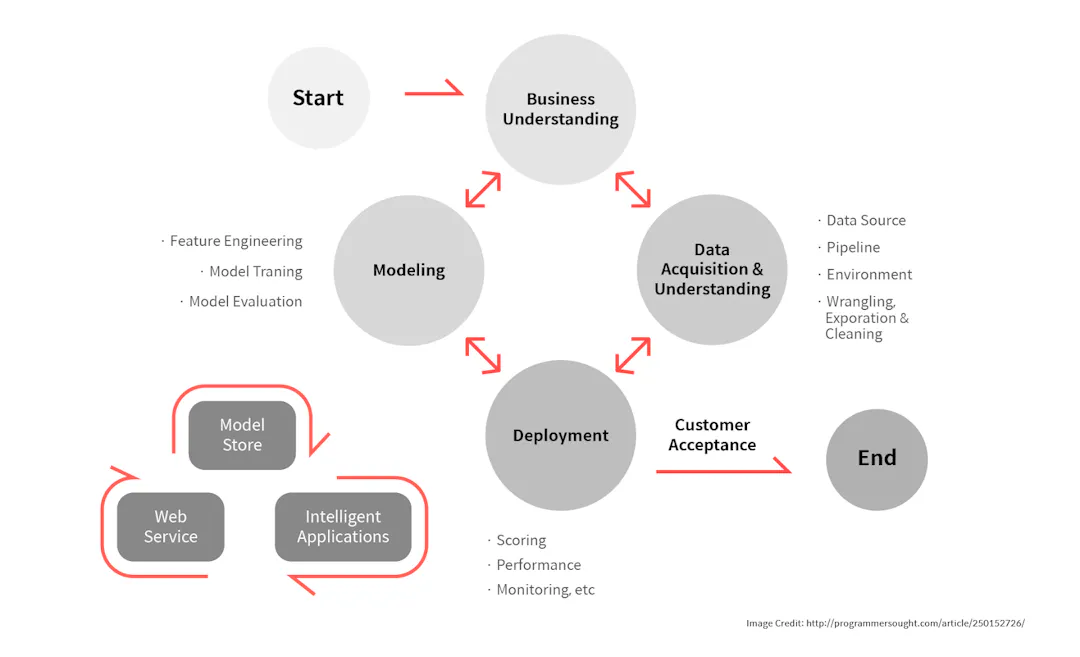

In the past, machine learning systems were naively developed and deployed in a relatively simple process. But nowadays the machine learning lifecycle is much more complex. As datasets require continuous updates and contributions from multiple personas, a new machine learning data platform is needed to support such a workflow.

Machine Learning Development

A simplistic view of how ML systems were developed in the past

Enterprises have been recording and storing data for years. From scanned documents to user activity logs, these files were not stored specifically with training machine learning models in mind. Yet with rapid advancements in deep learning, many enterprises still tried to put them to use. We’ve even seen attempts to create an ML system sprout from genuine yet nebulous questions like “we have so much data stored — how can we use them?” or “we have some data, so let’s try doing something with machine learning”.

As a result, most early, naive approaches to building a machine learning system has been linear and sequential, and they did not deviate much from the following series of steps:

- Organize and clean existing raw data (image, video, text, etc.)

- Annotate raw data and create a training dataset

- Train a machine learning model

- Deploy the trained model

Machine Learning Life-Cycle of Today

As it turns out, the past was much simpler. The machine learning life cycle of today is much more complex. In fact, having a collection of data is hardly enough to feel qualified for today’s ML projects.

Here is the list of questions we must routinely ask even before we can scope out any ML project. And with growing complexity and innovation in the AI space, this list will keep growing. While answering such questions may require too much detail for someone starting out with their ML project, it’s important to grasp the expanse of what is possible. Here is a shortlist of the most meaningful questions an ML expert generally poses before jump-starting a machine learning project.

17 Key Questions to Ask Before Embarking on Your Next ML Project

Business Understanding

- What do you expect to gain from the machine learning system?

- What are the exact application scenarios and the expected business impact?

- Do you understand the expected performance and limitations of using an ML?

- How will you monitor and measure the performance of ML models?

Modeling

- What machine learning model will you use?

- What are your requirements for performance, in terms of computing speed (inference speed), accuracy, precision, and recall?

- What are your requirements for training? Will you rely on a cloud computing server? Will you continuously update your model?

Data Acquisition & Understanding

- Do you have enough data to train your said model? If not, how will you collect additional data? Will you crowdsource, web-crawl, or purchase pre-made datasets?

- What are the legal implications of your data source? Are they copyrighted?

- Will you implement data augmentation techniques?

- Can your use-case resort to synthetically generated data?

Data Labeling

- Who will label your data? Do you have your own in-house data labeling team? Will you outsource to a labeling agency?

- Which data labeling tool will you use? Does it support whatever functions you need, such as visualization, statistics, version control, and multi-person collaboration?

- Will you use pre-trained machine learning models to speed-up the labeling process? If so, do you have access to training the said model? How often will you be re-training this model?

Model Training

- After you build the dataset, what infrastructure will you use to train the models? In-house GPU servers or cloud servers?

- Do you need access to advanced hardware, i.e. TPUs?

- Will you train the models internally or will you use third-party model training services? Will you automate the hyper-parameter tuning and architecture search (Auto-ML)?

These are just a small subset of questions that must be answered to train and deploy a production-grade machine learning system. And to summarize the most important part – it doesn’t end here.

Once you have your first prototype ML model, you would then need to analyze and debug wherever your model performed poorly and continuously iterate through the ML development cycle.

Closing Thoughts

It is widely known that implementing AI solutions at scale can be extremely challenging. Deeplearning.ai reports that “only 22 percent of companies using machine learning have successfully deployed a model”.

We can also agree that a large part of this challenge undoubtedly stems from fragmentation in the ML workflow and teams being siloed in different walled gardens that increase the complexity of collaboration without necessarily improving the quality or speed of the output. This can be extremely frustrating to contend with and can severely delay or completely inhibit teams from continuously updating and maintaining high-quality data, as well as training and deploying production-level ML models. Also, many resource-conscious ML teams feel overwhelmed by having to wade through a sea of highly technical and confusing software solutions and going through the learning curve when attempting to build ML Ops infrastructure. Therefore, at Superb AI, we are motivated to synthesize our best insights and practices gathered from decades of ML experience and deliver solutions that can empower ML teams and reinvent their ML development cycle, re-shifting focus back to building amazing AI technology rather than the workflow tools themselves.

This underlying mission to democratize training data and ultimately machine learning development is at the heart of our platform at Superb AI.

Related Posts

Insight

⑪ Germany's Physical AI Moment: Siemens, BMW, and the Robot Unicorn Counteroffensive

Hyun Kim

Co-Founder & CEO | 15 min read

Insight

⑩ Big Tech Physical AI Trends (2): Tesla vs. Amazon Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 10 min read

Insight

⑨ Big Tech Physical AI Trends (1): NVIDIA vs. Google Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 7 min read

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high quality training datasets. If you want to experience the transformation, sign up for free today.