
⑤ From Seeing to Governing Actions: The Technological Revolution from Vision AI to Physical AI

Hyun Kim

Co-Founder & CEO | 2026/03/03 | 7 min read

![[Physical AI Series 5] Why Now Is the Moment for Physical AI](https://cdn.sanity.io/images/31qskqlc/production/89f10c7aa758858705e7ba85b8f17f81662f0b67-2000x1125.png?fit=max&auto=format)

The generative AI revolution—led by ChatGPT and Midjourney—has largely remained in the digital world. But AI is now crossing the boundary into the physical world through Physical AI, also known as Embodied AI.

Physical AI refers to next-generation AI that perceives the physical environment through sensors, makes intelligent decisions, and directly intervenes and interacts with the real world through an embodiment—such as robots, autonomous vehicles, and drones. It operates in a continuous loop of perception → decision → action, going beyond content generation to perform real, measurable actions.

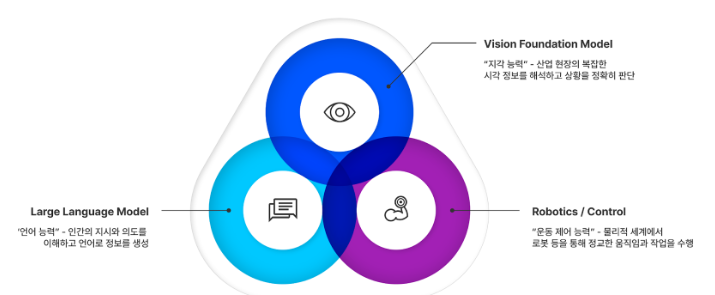

(Components of Physical AI: Perception/Judgment → Reasoning → Action)
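The perception → decision → action loop described above can be sketched in a few lines. This is a minimal toy simulation, not a real robot stack: the `Observation` type, the function names, the 0.5 m stop threshold, and the 0.25 m step size are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical sensor reading: distance to the nearest obstacle, in meters."""
    obstacle_distance_m: float


def perceive(raw_distance_m: float) -> Observation:
    # Perception: turn a raw sensor value into a structured observation.
    return Observation(obstacle_distance_m=max(0.0, raw_distance_m))


def decide(obs: Observation, stop_threshold_m: float = 0.5) -> str:
    # Decision: pick a command based on the current observation.
    return "stop" if obs.obstacle_distance_m < stop_threshold_m else "forward"


def act(command: str, position_m: float, step_m: float = 0.25) -> float:
    # Action: apply the command to the simulated body and return the new position.
    return position_m + step_m if command == "forward" else position_m


def run_loop(obstacle_at_m: float, max_steps: int = 20):
    """Repeat perceive -> decide -> act until the robot stops or max_steps is hit."""
    position_m = 0.0
    log = []
    for _ in range(max_steps):
        obs = perceive(obstacle_at_m - position_m)
        command = decide(obs)
        log.append(command)
        position_m = act(command, position_m)
        if command == "stop":
            break
    return position_m, log


final_position, commands = run_loop(obstacle_at_m=2.0)
```

In a real system each stage would be far richer (camera models, planners, motor controllers), but the control flow stays the same: every action changes what the next perception step sees.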

This shift will directly reshape the $60 trillion physical economy—including manufacturing, logistics, health care, and construction. Physical AI has the potential to automate complex manual labor once considered impossible, ease severe labor shortages, and protect human safety in hazardous working environments.

But what is the most fundamental prerequisite for turning this vision into reality? The machine’s “eyes.” Without Visual Intelligence—the core sense that enables Physical AI to understand and act in the real world—even the most advanced robot becomes ineffective.

Drawing on a presentation by Superb AI CEO Hyun Kim at Korea’s National IT Industry Promotion Agency (NIPA), this post examines why success in the Physical AI era depends on Vision AI—and how Superb AI is addressing this core challenge to help shape the future of industry.

Why Physical AI, and Why Now: Market Pull Meets Technical Breakthroughs

Physical AI has become an urgent topic—not a distant future—because explosive market demand and enabling technical breakthroughs are accelerating at the same time.

An Exploding Market

According to a global market analysis, the Physical AI market is expected to grow from approximately $4.4 billion in 2025 to over $23 billion by 2030, a compound annual growth rate (CAGR) of roughly 39%. This growth reflects surging demand for human-robot interaction across society, from industrial automation and logistics innovation to medical and caregiving services.

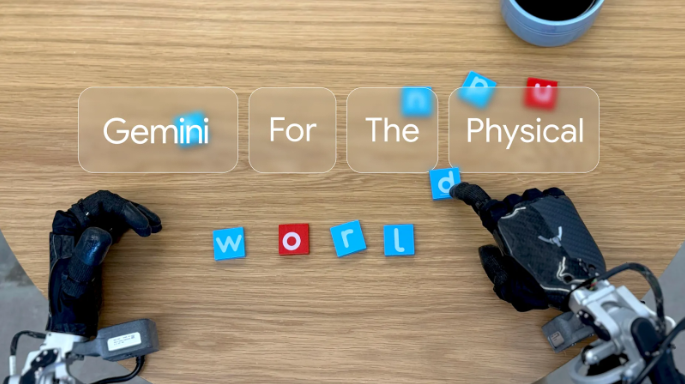
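As a quick sanity check, the growth rate can be recomputed from the two market-size figures quoted above. The helper name `cagr` is our own; the inputs are the rounded values from the text, so the result is only approximate.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.39 means 39%)."""
    return (end_value / start_value) ** (1 / years) - 1


# $4.4B in 2025 growing to $23B in 2030 is five years of compounding.
rate = cagr(4.4, 23.0, 2030 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

The result lands at roughly 39%, consistent with the quoted figure.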

“General-Purpose Robot Brains”: The Rise of Robotics Foundation Models

Traditional robots had a fundamental limitation: they had to be individually programmed for specific tasks. But Robotics Foundation Models (RFMs) are now changing that paradigm. Trained on massive-scale datasets, a single large foundation model can serve as a general-purpose “brain” that transfers across different robots and a wide range of tasks.

The emergence of general-purpose models—such as Google DeepMind’s Gemini Robotics and NVIDIA’s Project GR00T—is accelerating the mainstream adoption of Physical AI. And as these universal “brains” spread, demand is surging for standardized, high-performance vision systems that enable them to perceive the real world accurately. This is precisely where Superb AI’s role as a Vision AI specialist becomes critical.

The “Eyes” of Physical AI: Why Vision AI Is the Core Enabler

For Physical AI to perform meaningful “actions” in the physical world, it requires sophisticated motion systems—such as motors and actuators. But for those systems to move correctly, they must first understand the surrounding environment with precision.

All senses matter, and Physical AI perceives the world through multiple sensors, including cameras, LiDAR, and radar. Yet in a complex, dynamic real world designed around humans, the most information-rich and intuitive signal is camera-based vision. For robots to read work instructions, identify specific products, understand subtle human behaviors, and collaborate with coworkers, they need visual perception capabilities that closely mirror how humans see and interpret their environment.

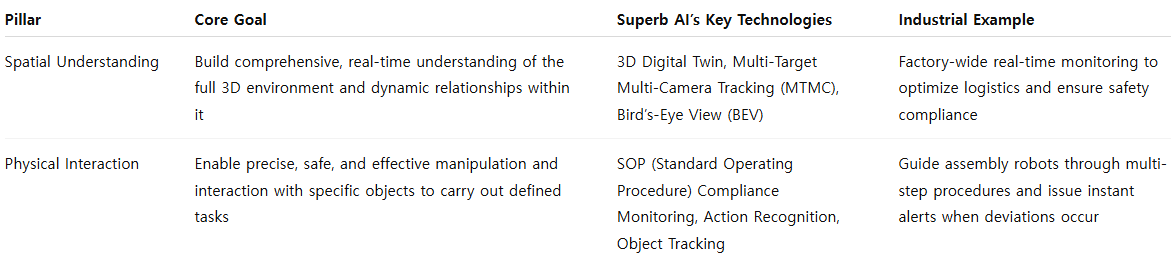

As a Vision AI specialist, Superb AI aims to provide the “eyes” for Physical AI—enabling machines to see, understand, and interact with the world with clarity. To do so, Superb AI is developing Physical AI’s visual intelligence across two core pillars:

- Spatial Understanding: The ability for robots or autonomous vehicles to comprehend the entire surrounding environment in 3D and interpret relationships among objects—in other words, to read the context of a situation.

- Physical Interaction: The ability to directly interact with specific objects in front of the machine to execute a defined task—in other words, to perform precise work.

These two pillars represent Superb AI’s clear roadmap for systematically addressing the complexity of visual intelligence in Physical AI.

Strategy 1: Spatial Understanding — Building a “World Model” for Physical AI

For Physical AI to work successfully, it must first develop a comprehensive understanding of the environment it operates in. Superb AI addresses this challenge with technologies that build a precise 3D digital twin using only existing CCTV footage or smartphone video. By replicating the real world in a virtual space—without expensive specialized equipment—organizations can simulate operations and optimize workflows, dramatically lowering the barrier to adoption.

Superb AI also provides core capabilities that integrate multiple camera streams to understand the full situation end-to-end:

- 3D Reconstruction: Reconstructs an entire space as a 3D digital twin using only standard 2D video captured by general cameras—without specialized LiDAR sensors. This allows organizations to leverage existing infrastructure to build virtual simulations, optimize operations, and create robot training environments without major added cost.

- Multi-Target Multi-Camera Tracking (MTMC): Tracks people or vehicles seamlessly across multiple camera views, enabling complete visibility into movement paths across large spaces.

- Bird’s-Eye View (BEV): Converts multiple 2D camera feeds into a unified top-down planar map—supporting path planning for autonomous vehicles and robots.
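To make the Bird's-Eye View idea concrete, here is a minimal sketch of one common way to map detections from several cameras onto a shared top-down plane: a per-camera planar homography. This is a generic technique, not a description of Superb AI's implementation. The function names, camera IDs, and matrix values below are hypothetical; in practice each homography would come from camera calibration.

```python
def pixel_to_ground(h, pixel):
    """Project an image pixel (u, v) onto the ground plane using a 3x3 homography."""
    u, v = pixel
    x = h[0][0] * u + h[0][1] * v + h[0][2]
    y = h[1][0] * u + h[1][1] * v + h[1][2]
    w = h[2][0] * u + h[2][1] * v + h[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel maps to the horizon; no ground-plane point")
    # Divide by w to convert from homogeneous to metric ground coordinates.
    return (x / w, y / w)


def fuse_to_bev(detections, homographies):
    """Merge per-camera pixel detections into one list of ground-plane points."""
    bev_points = []
    for camera_id, pixels in detections.items():
        h = homographies[camera_id]
        bev_points.extend(pixel_to_ground(h, p) for p in pixels)
    return bev_points


# Hypothetical calibration: cam_a is a pure scale (0.01 m per pixel);
# cam_b adds an offset and a perspective term.
HOMOGRAPHIES = {
    "cam_a": ((0.01, 0.0, 0.0), (0.0, 0.01, 0.0), (0.0, 0.0, 1.0)),
    "cam_b": ((0.01, 0.0, 5.0), (0.0, 0.01, 0.0), (0.0, 0.001, 1.0)),
}
# Hypothetical detections: foot points of tracked objects, in pixels.
DETECTIONS = {"cam_a": [(200.0, 300.0)], "cam_b": [(100.0, 1000.0)]}

bev = fuse_to_bev(DETECTIONS, HOMOGRAPHIES)
```

Once every camera reports positions in the same ground coordinates, downstream steps such as path planning or cross-camera track association can work on a single map.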

This direction aligns with the goal of world foundation models—such as NVIDIA Cosmos—that generate virtual worlds to train AI. Superb AI delivers practical solutions that combine the intelligence of these large-scale models with real industrial video, creating immediate operational value.

Strategy 2: Physical Interaction — Controlling the “Body” of Physical AI

Once a system understands a broad environment, the next step is creating value through precise interaction with specific targets. A typical use case is SOP (Standard Operating Procedure) compliance monitoring. In manufacturing, ensuring product quality and worker safety depends on executing defined procedures accurately.

Superb AI’s Vision AI analyzes worker behavior in real time through cameras. It recognizes both the parts being handled and the actions being performed through Object Tracking and Action Recognition, and increases precision by incorporating Vision-Language Models (VLMs). A VLM can understand SOP steps described in language, then compare them in real time against the worker’s movements in video—issuing instant alerts when deviations occur. This overcomes the limits of manual inspection and directly contributes to reducing defects, lowering costs, and preventing safety incidents.
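The comparison step can be illustrated with a small state machine. The sketch below assumes an upstream model (action recognition or a VLM) already emits one action label per video segment; the `SopMonitor` class, the step names, and the alert format are hypothetical and only show the deviation-checking logic, not Superb AI's actual pipeline.

```python
from dataclasses import dataclass, field


@dataclass
class SopMonitor:
    """Checks a stream of recognized actions against an ordered list of SOP steps."""
    steps: list
    next_index: int = 0
    alerts: list = field(default_factory=list)

    def observe(self, action: str) -> None:
        # Case 1: the worker performs the step we expect next.
        if self.next_index < len(self.steps) and action == self.steps[self.next_index]:
            self.next_index += 1
            return
        # Case 2: the previous step is still in progress (same label on later frames).
        if self.next_index > 0 and action == self.steps[self.next_index - 1]:
            return
        # Case 3: anything else is a deviation, so record an alert.
        if self.next_index < len(self.steps):
            expected = self.steps[self.next_index]
        else:
            expected = "no further action"
        self.alerts.append(f"expected '{expected}', observed '{action}'")

    @property
    def complete(self) -> bool:
        return self.next_index == len(self.steps)


monitor = SopMonitor(steps=["pick_part", "insert_part", "tighten_bolt"])
for label in ["pick_part", "pick_part", "tighten_bolt", "insert_part", "tighten_bolt"]:
    monitor.observe(label)
```

Here the worker tightens the bolt before inserting the part, so the monitor records one alert and then marks the procedure complete once the steps are performed in order.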

So how do robots learn to perform complex work like this? The answer lies in simulation. Training robots on real factory lines is not only time-consuming and expensive—it can also cause mechanical wear and failures through repeated trial-and-error.

Robot simulation platforms such as NVIDIA Isaac Sim address this challenge. Isaac Sim provides a highly realistic virtual environment (a digital twin) in which robots can learn across countless scenarios without physical constraints. Simulated experience can be generated thousands of times faster than on physical hardware, producing far more attempts (data) in a short period, and training can run 24/7 without concerns about mechanical fatigue. Training AI models extensively in virtual environments and then transferring them to real robots, an approach known as Sim-to-Real, is becoming the standard for maximizing efficiency and safety in Physical AI development.

(Example: Virtual factory environment built with Isaac Sim; forklift navigation scene. Source: NVIDIA.)

The Data Engine Accelerating the Physical AI Revolution: Superb AI’s MLOps Platform

At its core, the Physical AI revolution is a data-centric AI revolution. Competitive advantage is determined not by having the “best algorithm,” but by the ability to continuously supply, manage, and improve higher-quality data over time.

This is where Superb AI’s MLOps platform plays a central role. The platform is an integrated solution that manages the entire data lifecycle required for AI development:

- Data Build and Processing: Improves labeling efficiency by up to 10× through automation tools such as Custom Auto-Label.

- Data Curation and Model Management: Identifies the most performance-critical data, then enables no-code model training, diagnosis, and deployment—supporting continuous performance improvement.

- Vision Foundation Model “ZERO”: Recently introduced, ZERO can be deployed on-site with a relatively small amount of data—dramatically reducing the time and cost required for data building.
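One widely used heuristic for finding "performance-critical" data is uncertainty sampling: prioritize the samples the current model is least sure about. The sketch below illustrates that general idea only; it is an assumption on our part and not a description of how Superb AI's curation works. The function names and the example predictions are hypothetical.

```python
import math


def entropy(probs) -> float:
    """Shannon entropy of a class-probability vector; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions, budget: int):
    """Return the IDs of the `budget` samples with the most uncertain predictions."""
    ranked = sorted(predictions, key=lambda k: entropy(predictions[k]), reverse=True)
    return ranked[:budget]


# Hypothetical model outputs: image ID -> predicted class probabilities.
PREDICTIONS = {
    "img_a": [0.98, 0.01, 0.01],  # confident prediction
    "img_b": [0.40, 0.35, 0.25],  # highly uncertain
    "img_c": [0.70, 0.20, 0.10],  # moderately uncertain
}

to_label = select_for_labeling(PREDICTIONS, budget=2)
```

With a labeling budget of two, the confident sample `img_a` is skipped and annotation effort goes to the two images the model finds hardest.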

Ultimately, Superb AI’s MLOps platform becomes a core infrastructure layer—and a strategic asset—that enables companies to build Physical AI not as a one-off pilot, but as a scalable, repeatable engineering process.

In the Physical AI Era, the Ability to “See” Will Decide the Future

The next wave of the AI revolution is moving toward physical reality. Physical AI will bring intelligence to robots and autonomous systems—reshaping the foundations of industries such as manufacturing, logistics, and safety. At the center of this transformation is a powerful AI “brain.” But for intelligence to matter in the real world, it must ultimately rely on the most fundamental sense: vision.

Superb AI offers a clear strategy to solve this core “vision problem”:

- Spatial Understanding provides machines with broad situational awareness.

- Physical Interaction gives them a sharp focus to act precisely on specific targets.

These two capabilities come together on top of a robust, data-centric MLOps platform—helping organizations turn the Physical AI vision into reality.

Tomorrow’s factories and logistics centers will see, understand, and make optimal decisions on their own. Superb AI is building the “eyes” for this intelligent industrial future.

Talk with Superb AI experts today to explore what Physical AI adoption could look like for your organization.

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration, and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high-quality training datasets. If you want to experience the transformation, sign up for free today.