⑥ Google Gemini Robotics and NVIDIA Newton: The Frontline of the Physical AI Revolution

Hyun Kim

Co-Founder & CEO | 2026/03/16 | 7 min read

![[Physical AI Series 6] Physical AI Today: Google & NVIDIA](https://cdn.sanity.io/images/31qskqlc/production/ced9f9c80b110010f8a8a747aef6fc5ac1d65794-2000x1125.png?fit=max&auto=format)

Recent announcements from global tech leaders such as Google and NVIDIA signal something larger than a wave of new product launches. They mark the beginning of the Physical AI era.

This shift represents a foundational moment for robotics and autonomous systems. The core technologies needed to develop intelligent machines at scale are finally coming together—transforming Physical AI from a research topic into a force capable of reshaping entire industries.

But what exactly is Physical AI?

Unlike traditional AI systems that operate purely in digital environments, Physical AI—also known as Embodied AI—interacts directly with the real world through physical systems such as robots, drones, and autonomous vehicles. These systems operate through a continuous loop of perception → reasoning → action.

Sensors such as cameras and LiDAR allow machines to perceive the environment. Advanced AI models interpret and reason about what they observe. Actuators—motors, robotic arms, and mobility systems—then translate those decisions into physical actions. This cycle enables machines to navigate the unpredictable complexity of real-world environments.
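The loop described above can be made concrete with a toy example. The sketch below is only an illustration under simplifying assumptions: the world is a single line, the "sensor" reports the signed distance to a target, and the names `World`, `perceive`, `reason`, `act`, and `run_loop` are hypothetical, not part of any robotics library.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Toy 1-D world: a robot on a line and a fixed target position."""
    robot_pos: float
    target_pos: float


def perceive(world: World) -> float:
    """Perception: a stand-in sensor that reports signed distance to the target."""
    return world.target_pos - world.robot_pos


def reason(distance: float, max_step: float = 1.0) -> float:
    """Reasoning: choose a movement command, clipped to what the actuator allows."""
    return max(-max_step, min(max_step, distance))


def act(world: World, command: float) -> None:
    """Action: the stand-in actuator applies the command and changes the world."""
    world.robot_pos += command


def run_loop(world: World, tolerance: float = 1e-6, max_iters: int = 100) -> int:
    """Repeat perceive -> reason -> act until the target is reached.

    Returns the number of actions taken (or max_iters if the target is not reached).
    """
    for step in range(max_iters):
        distance = perceive(world)
        if abs(distance) <= tolerance:
            return step
        act(world, reason(distance))
    return max_iters


if __name__ == "__main__":
    world = World(robot_pos=0.0, target_pos=3.5)
    steps = run_loop(world)
    print(f"reached {world.robot_pos} in {steps} steps")
```

A real system replaces each function with something far heavier (camera and LiDAR processing, a learned model, motor controllers), but the control flow keeps the same shape: every action changes the world, so the next decision has to start from a fresh observation.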

The economic potential of this emerging field is enormous. Goldman Sachs estimates the humanoid robot market could reach $38 billion by 2035, while Morgan Stanley projects it could grow to $5 trillion by 2050. The scale of investment and technological competition ahead reflects just how transformative this market could become.

Interestingly, the recent moves by Google and NVIDIA position the two companies less as direct competitors than as complementary pillars of the same ecosystem. One is building the robot’s brain, while the other is building the world where robots learn and operate.

1. Gemini Robotics: A Robot That Thinks with Two Brains

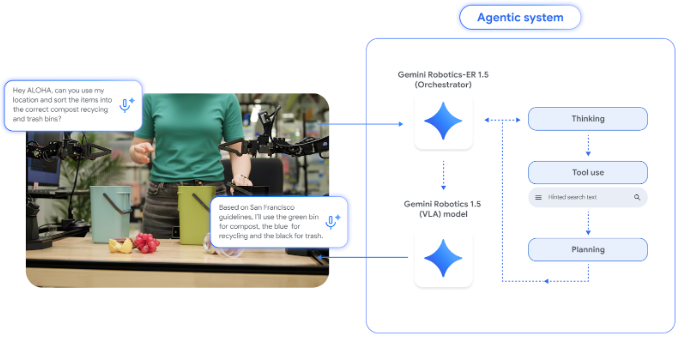

In September 2025, Google DeepMind introduced Gemini Robotics 1.5, a new AI system designed specifically for robotics. One of its defining features is a dual-brain architecture.

The first component, Gemini Robotics-ER 1.5, acts as the robot’s high-level reasoning engine. It interprets instructions, plans tasks, and can even search the web to gather contextual information.

Imagine a robot receiving the instruction:

“Sort these items into recycling, general waste, and food waste according to local regulations.”

The ER model would first retrieve the relevant recycling rules for that region and analyze the surrounding objects to generate an execution plan.

The second component, Gemini Robotics 1.5, is a Vision-Language-Action (VLA) model that converts that plan into real-world actions. Before executing a task, the system internally generates a natural-language reasoning chain explaining its decision process, making the robot’s behavior more interpretable to humans.
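The division of labor between the two components can be sketched as a planner feeding an executor. This is a toy illustration of the pattern only, not Google's API or implementation: the `LOCAL_RULES` table stands in for the regional rules the reasoning model would look up, and `Step`, `plan`, `execute`, and `run` are hypothetical names.

```python
from dataclasses import dataclass

# Hypothetical sorting rules. In the article's example, the reasoning model
# would retrieve these for the robot's region; here they are hard-coded.
LOCAL_RULES = {
    "bottle": "recycling",
    "banana peel": "food waste",
    "tissue": "general waste",
}


@dataclass
class Step:
    """One unit of the plan: which item goes to which destination bin."""
    item: str
    destination: str


def plan(items: list[str], rules: dict[str, str]) -> list[Step]:
    """High-level stage: combine observed items and rules into an ordered plan.

    Items without a rule fall back to general waste (an assumption of this sketch).
    """
    return [Step(item, rules.get(item, "general waste")) for item in items]


def execute(step: Step) -> tuple[str, str]:
    """Low-level stage: state the reasoning in words first, then emit an action."""
    thought = f"The {step.item} belongs in {step.destination}, so I will move it there."
    action = f"pick({step.item}) -> place({step.destination})"
    return thought, action


def run(items: list[str], rules: dict[str, str] = LOCAL_RULES) -> list[tuple[str, str]]:
    """Plan once, then execute each step, keeping the reasoning next to the action."""
    return [execute(step) for step in plan(items, rules)]


if __name__ == "__main__":
    for thought, action in run(["bottle", "banana peel", "tissue"]):
        print(thought)
        print("  ", action)
```

Keeping the stated reasoning alongside each action is what makes the behavior inspectable: a human reviewing the log can see why an item went to a given bin, not just that it did.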

(Architecture of the Gemini Robotics 1.5 system. Source: Google DeepMind)

One of the most significant breakthroughs is the system’s cross-platform capability transfer. Skills learned on one robot can be transferred to entirely different robot platforms without retraining.

For example, tasks learned using the ALOHA 2 robot can be executed by other robots, such as Apptronik’s Apollo humanoid or a bi-arm Franka system. This ability addresses a long-standing challenge in robotics known as the embodiment problem, where each robot traditionally required its own model training pipeline.

By enabling knowledge transfer across different robotic bodies, Gemini Robotics 1.5 could dramatically accelerate the development cycle for robotics applications.

Google describes this as a step toward robots that move beyond repetitive automation and toward systems that understand context, use tools, and plan tasks autonomously. Google DeepMind frames the release as an early milestone on the path toward AGI in the physical world.

2. NVIDIA Isaac and Newton: Building the “Matrix” for Robots

While Google is advancing the cognitive layer of robotics, NVIDIA is building the infrastructure layer that allows robots to learn and operate.

At CoRL 2025, NVIDIA introduced several technologies designed to accelerate robotics research. One of the most notable announcements was Newton, a new open-source physics simulation engine developed in collaboration with Disney Research and Google DeepMind. Newton is integrated into the NVIDIA Isaac Lab robotics platform.

The new engine enables highly realistic simulations of complex physical interactions—such as walking on snow or gravel, or performing delicate object manipulation tasks that were previously difficult to simulate accurately.

Research institutions and robotics companies—including ETH Zurich, the Technical University of Munich, and Peking University—have already begun adopting the engine to build realistic digital twin environments.

NVIDIA also introduced Isaac GR00T N1.6, an open robot foundation model designed to bring human-level reasoning capabilities to robotic systems. GR00T allows robots to interpret ambiguous natural-language instructions and break them into step-by-step action plans using contextual reasoning and prior knowledge.

For example, an instruction such as “open the heavy door” requires understanding not only the action itself but also how to balance the robot’s body and apply force appropriately. GR00T enables this kind of contextual reasoning.

The model integrates Cosmos Reason, an open vision-language system that helps robots generate additional knowledge when encountering unfamiliar situations. It can also create and annotate large volumes of synthetic data to improve training.

Together, NVIDIA’s stack combines three critical components:

- Robot brain — GR00T foundation model

- Robot body simulation — Newton physics engine

- Training environment — Omniverse-based Isaac Lab

This unified platform allows models trained in simulation to be rapidly deployed onto real robots.

Synthetic Worlds for Training Robots

NVIDIA also expanded its Cosmos world foundation models (WFMs) to accelerate the training of Physical AI systems.

Models such as Cosmos Predict 2.5 and Cosmos Transfer 2.5 can generate virtual environments using text, image, or video prompts. These systems can:

- generate multi-view videos up to 30 seconds long

- synthesize realistic images from 3D simulation environments

- create large volumes of synthetic training data

(Example application of NVIDIA Cosmos model)

This capability helps solve one of the biggest bottlenecks in robotics development: data scarcity. Many real-world training scenarios are expensive, dangerous, or simply impossible to capture at scale. Synthetic environments provide a practical alternative.

NVIDIA’s Physical AI stack—spanning simulation, AI models, and data generation—is laying the groundwork for the next generation of autonomous robots.

The Emerging Physical AI Ecosystem

Taken together, the strategies of Google and NVIDIA reveal a broader pattern. Google is building a universal reasoning layer—the “mind” of robots. NVIDIA is building the physical and technical foundation—the “body and world.” This complementary approach lowers barriers across the robotics ecosystem and accelerates innovation across the industry.

Only a few years ago, robots were largely limited to repetitive automation tasks. Today, they are evolving into intelligent agents capable of perception, learning, and collaboration with humans.

Google’s Gemini Robotics demonstrates autonomous reasoning and cross-platform learning. NVIDIA’s open simulation platforms and robot foundation models provide the infrastructure for training these systems at scale.

Both efforts point toward the same destination: Physical AI—machines that can understand and act within the real world. For the industry, this shift could drive significant improvements in productivity, safety, and entirely new business models. At the same time, it highlights the growing importance of AI development infrastructure. Without platforms capable of handling data pipelines, large-scale model training, and deployment, it will be difficult for any single organization to keep pace with the rapid evolution of Physical AI.

Superb AI is contributing to this ecosystem with its Vision Foundation Model ZERO and MLOps platform, supporting the development and deployment of AI systems across real-world environments. As the building blocks of Physical AI mature—robot perception, reasoning, and large-scale simulation—the vision once seen only in science fiction, from robot assistants to fully autonomous smart factories, is moving steadily closer to reality.

For organizations across industries, now is the time to start preparing by identifying where AI models and infrastructure strategies can deliver real operational impact. In the coming era of Physical AI, robots that can think and act will become new partners in both our daily lives and industrial environments. Partner with Superb AI to lead this new era.
