News

Superb AI Joins NVIDIA's Physical AI Ecosystem as a Key Vision AI Partner

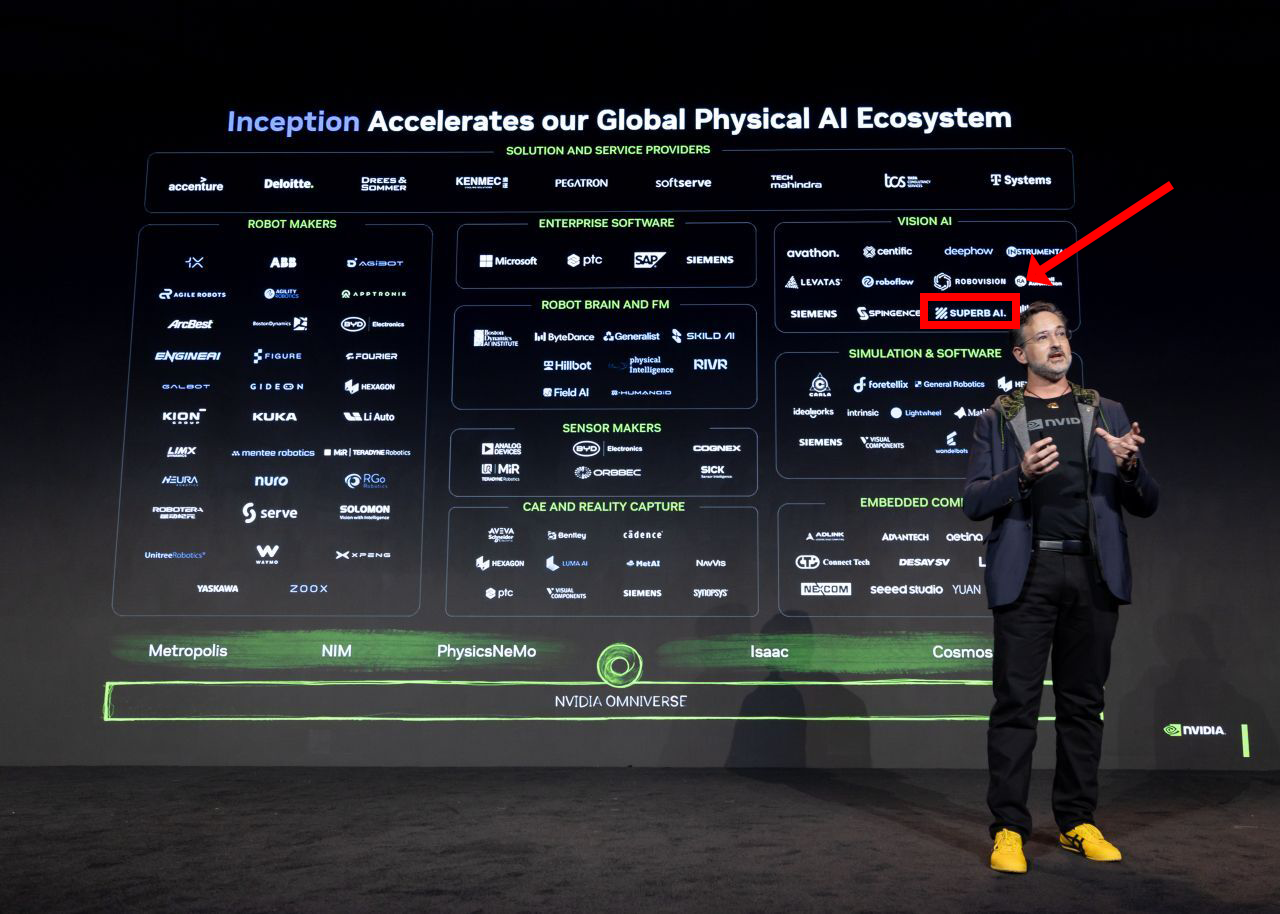

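1. The "ChatGPT Moment" Is Arriving for Physical AI

In January 2026, at CES 2026 in Las Vegas, one of the world's largest technology trade shows, NVIDIA CEO Jensen Huang declared in his keynote that "The ChatGPT moment for robotics is here." The statement is more than rhetoric about progress in robotics. It signals that AI, which until now generated text and images, is evolving into "acting intelligence": intelligence that understands the laws of physics, perceives the complex real world, and carries out physical tasks through a robot body. If the robots of the past were automated machines that followed pre-programmed routines, the robots of the physical AI era are autonomous agents that learn, reason, and adapt to unexpected situations.

Superb AI is at the center of this major technological shift. Introduced at CES 2026 as one of NVIDIA's key physical AI partners, Superb AI provides the core Vision AI technology that lets physical AI "see" the world correctly and make appropriate decisions. Working with robot makers and enterprise software companies within the NVIDIA ecosystem, Superb AI acts as a bridge that carries AI technology out of the lab and into industrial sites and everyday life.

In this article, we take a deep dive into the physical AI strategy NVIDIA presented at CES 2026 and give a comprehensive overview of how Superb AI defines physical AI, its core technologies, and its strategy for expanding the ecosystem through global partnerships. We then look concretely at the changes physical AI will bring, through real-world examples of its application in industry.

2. A Deep Dive into the Physical AI Ecosystem: NVIDIA's Blueprint and Key Components

2.1 Structure of NVIDIA's Physical AI Ecosystem

To foster the physical AI ecosystem, NVIDIA runs a startup program called NVIDIA Inception. At CES 2026, Les Karpas, who leads physical AI globally for NVIDIA Inception, unveiled the key partners driving the physical AI era. The ecosystem makes a point: physical AI cannot be achieved by any single company. It is an integrated system that works only when hardware, AI (the "brain"), and the solutions that deploy AI in industry come together.

NVIDIA's ecosystem map is divided into three core areas, Hardware, AI, and Solutions, which function in close interdependence.

2.1.1 Hardware: Body, Senses, and Computing

This area forms the physical foundation of physical AI. The "body" built by robot makers is combined with the "sensory organs" supplied by sensor makers, while edge computing supports real-time processing.

Robot Makers:

Sensor Makers:

Embedded Computing:

2.1.2 AI (Brain): Cognition, Thinking, and Simulation

This is the core software and model layer that brings intelligence to the hardware. Superb AI belongs to this area, which enables robots to understand the world, learn, and plan optimal actions.

Vision AI (visual intelligence):

AI for action planning and robot control:

Simulation / software:

2.1.3 Solutions: Industrial Integration and Services

This is the infrastructure and service layer that integrates finished physical AI technology into real business environments and keeps it running.

Solution / service providers:

Enterprise software:

3. Superb AI's Core Technology: Why It Plays a Central Role in the NVIDIA Ecosystem

In the NVIDIA ecosystem map described above, Superb AI is positioned as a key partner in the Vision AI (visual intelligence) area. The reason is that Superb AI's technology is essential for physical AI to move beyond simple automation and become "complete agents" that handle cognition, thinking, and action.

Superb AI plays the following technical roles in the NVIDIA ecosystem.

3.1 Complete Perception: 3D Spatial Intelligence and MTMC

To carry out tasks in the real world, a robot must understand in three dimensions both the structure of the space it occupies and the objects it has to handle. Without relying on expensive 3D sensors, Superb AI accurately extracts 3D information for both, using only footage from ordinary 2D cameras.

3D spatial intelligence and 3D reconstruction (digital twins):

MTMC (Multi-Target Multi-Camera Tracking):

3.2 Acting Intelligence: Agentic AI Based on Contextual Understanding

For physical AI to work on site the way a person does, recognizing objects is not enough. It must understand the context of a situation, integrate multiple sources of information, and make the best decision.

ZERO, a vision foundation model that instantly understands intent:

Agentic AI for complex reasoning and control:

3.3 Maximizing the Value of Video Data: Video Search and Summarization (VSS)

Conventional VMS (video management systems) simply store all footage for a fixed period, such as "the last 90 days," without any organization. When an incident does occur, staff have to review thousands of hours of recordings by hand. Superb AI's technology overhauls this process in two steps to maximize how useful video data can be.

Accumulating descriptions and summaries of video:

High-accuracy search in natural language:

4. Superb AI and NVIDIA: Maximizing Technical Synergy

If NVIDIA is an "infrastructure company" that provides powerful hardware (GPUs) and general-purpose AI software platforms, Superb AI is a key partner that builds on that foundation and packages it as "applied solutions" B2B customers can adopt right away. Applying NVIDIA's cutting-edge technology in industrial settings involves high technical hurdles; Superb AI closes that gap, and the two sides complement each other. Superb AI deeply integrates four of NVIDIA's key technology stacks into Superb Platform to deliver the following value.

4.1 NVIDIA Isaac Sim: "Real" Data Perfected in Virtual Space

Collecting robot-training data in the real world is costly, and hazardous scenarios such as fires and collisions would have to be staged physically. Superb AI

Overcoming edge cases:

Data Flywheel:

4.2 NVIDIA Cosmos: A Robot "Brain" That Understands the Laws of Physics

NVIDIA Cosmos is not a single model but a family of foundation models, each specialized for a particular purpose. It includes domain-specific models such as Cosmos WFM (World Foundation Model), which understands the dynamics of the physical world, and Cosmos Reason, which reasons about cause and effect in complex situations. Superb AI optimizes these models for each customer's real business environment.

Using each specialized model where it fits best:

Efficient fine-tuning through data curation and MLOps:

4.3 NVIDIA Metropolis and MTMC: Uninterrupted Tracking and Scalability

NVIDIA Metropolis

Edge-to-cloud flexibility:

City-scale wide-area tracking:

4.4 NVIDIA VSS Blueprint: Delivering the Next-Generation Standard for Video Search

NVIDIA's VSS (Video Search and Summarization) blueprint set out a standard for a next-generation AI architecture that makes video data as freely searchable and summarizable as text documents. Superb AI has built a video understanding and search solution along these lines and commercialized it as a product.

Semantic search that understands context:

Generating instant insights:

4.5 Manufacturing Innovation Connected by NVIDIA: Global Company P

The technical synergy described so far is not confined to the research stage. NVIDIA named Superb AI as the partner best able to make the most of its technology, and a Fortune 50 F&B company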
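Company P was considering bringing AI onto the shop floor to raise productivity and eliminate inefficiencies. In response, Superb AI

Real-time anomaly detection and tracking:

Conversational data analysis:

This case shows that Superb AI is using NVIDIA's technology ecosystem to deliver tangible business value to customers.

5. Physical AI: A Clear Path to the Future

The "ChatGPT moment for robotics" that Jensen Huang declared at CES 2026 is not a distant vision but a reality that has already begun. That reality is being shaped not by any single company's technology but on a robust ecosystem in which hardware, AI, and solution companies are organically connected.

Within this vast ecosystem, Superb AI plays a core role, giving robots the "eyes" (Vision) to see the world and the "brain" (Brain) to judge situations. The combination of NVIDIA's powerful infrastructure (Isaac Sim, Cosmos, Metropolis) and Superb AI's practical applied technologies (MTMC, VLM, VSS) has, as in the Global Company P case above, steadily turned lab technology into innovation on the industrial floor.

From defect detection in manufacturing to urban safety monitoring and control, and on to last-mile delivery by autonomous mobile robots, Superb AI's technology is in use across the domains where physical AI is needed.

Superb AI will continue to solve hard problems in the physical world through close technical collaboration with NVIDIA. We believe our philosophy of "delivering cutting-edge AI technology in the most accessible form" will be the most reliable compass for companies navigating the complex era of physical AI. As NVIDIA and Superb AI open up the era of physical AI together, we invite you to join us at the center of that innovation.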

Superb AI Japan

20 min read
