The world of machine learning is a data-hungry one. More data often equates to better models, especially in computer vision tasks. However, not all datasets are created equal. Sometimes, data can be scarce, particularly for certain cases that are less frequent or rare. This is where data augmentation techniques can significantly influence the model's performance.

A good understanding of how to approach and manage these challenges is key to building robust, reliable computer vision models. But how exactly can we navigate these complex waters? A part of the answer lies in tools like Superb Curate and its Auto-Curate features, which we've explored in detail in this article.

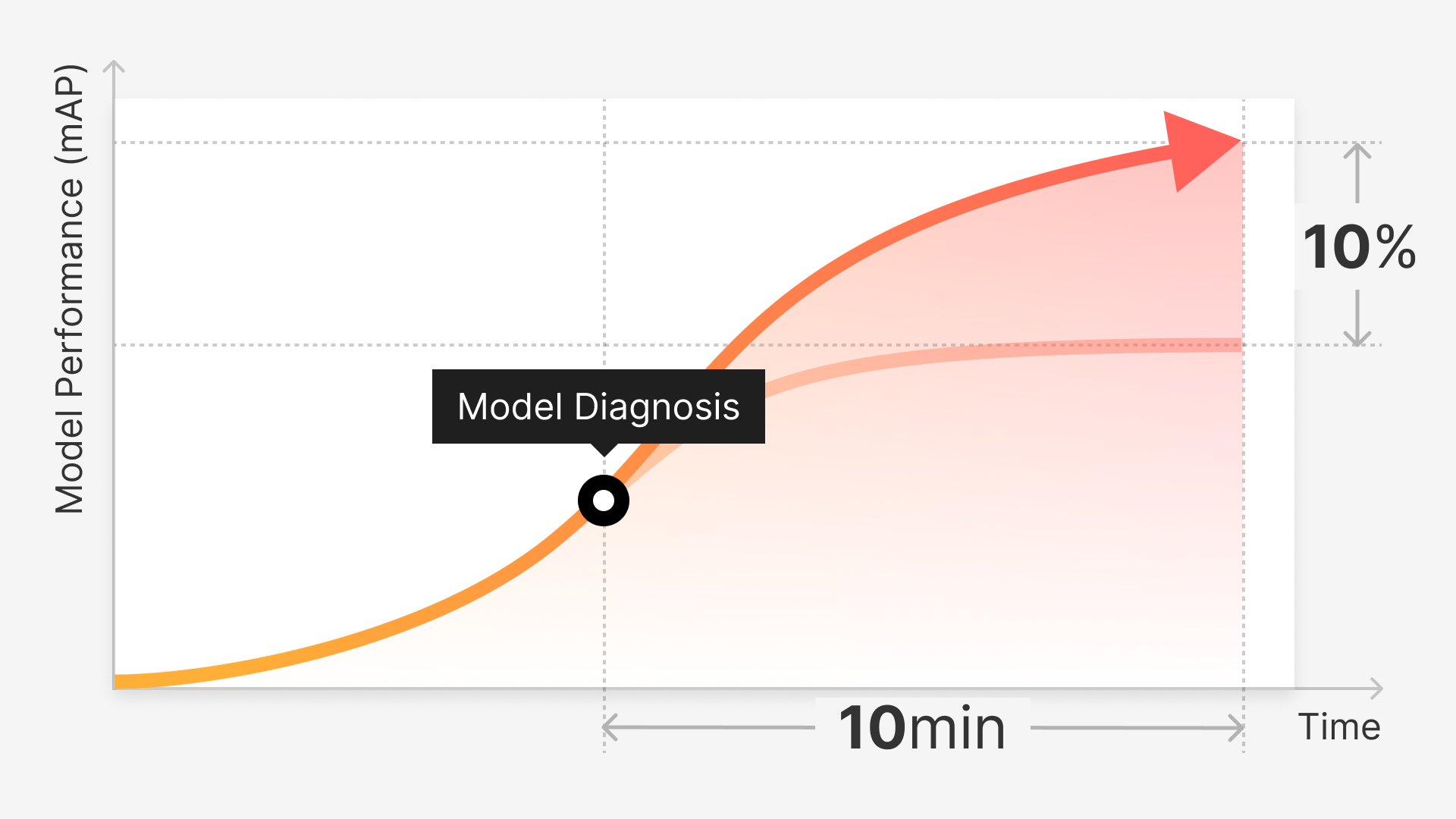

For those interested in diving deeper into the intricacies of model building, we also recommend reading our related articles. Our piece, “How Model Diagnosis Can Aid in Early Detection of Data Issues” uncovers how early and accurate identification of issues in your datasets can save time, improve efficiency, and ultimately lead to better predictive performance. Understanding these diagnostic techniques can be invaluable in preventing issues down the line, and ensuring that your models are trained on clean, high-quality data from the very beginning.

Additionally, our article, “Curating for Accuracy: Building Balanced Computer Vision Datasets,” dives into the practical strategies for assembling balanced datasets. This article offers a comprehensive guide on how to curate your data in a way that ensures representation, reduces bias, and improves the accuracy of your computer vision models.

We Will Cover

The challenges of imbalanced datasets

Superb Curate and tackling class and scenario imbalance

Resolving underlying data imbalances through augmentation

Conventional and advanced augmentation techniques

Case study examples

Conclusion

The Complexity of Collecting Data

Data is the lifeblood that powers computer vision systems. An adage often tossed around in this domain is "more data, better performance." While generally true, this statement is not without caveats.

Collecting a sheer volume of data is not a panacea, especially when dealing with imbalanced datasets. Moreover, the reality of data collection is often less straightforward than it might seem, particularly when the data needed is scarce or challenging to obtain.

When Rare Cases Occur

'Rare cases' or 'edge cases' represent scenarios or classes that occur less frequently in the dataset. Examples of these could be a rare disease in a medical dataset or a seldom-seen object in an image recognition dataset. In these instances, simply gathering more data might not be feasible due to the rarity of these cases or the difficulties associated with capturing these data points.

Dataset Imbalance and the Quest for Quality

Even if one were able to collect more data, it may not necessarily lead to a balanced dataset. Furthermore, merely augmenting the size of the dataset is not always a viable or effective solution.

The need for more data must also consider the quality and diversity of the data collected. For instance, if additional data collected is too similar to what already exists in the dataset, the model's ability to generalize could be hindered as it becomes too tailored to specific characteristics or patterns.

A Nuanced Approach to Imbalance

Therefore, a more nuanced approach is required when dealing with data imbalances, particularly for rare cases. This is where data augmentation comes in.

Rather than solely focusing on accumulating more data, data augmentation techniques enable us to enhance the existing data, generating a more diverse and balanced dataset. This approach can lead to better model performance, especially in situations where data collection can be challenging.

Facilitating the Creation of Balanced Datasets

The creation of effective and unbiased machine learning models can be quite a daunting task. It necessitates meticulous curation, labeling, and splitting of data, ensuring it's accurate, comprehensive, and well-organized. However, the sheer volume of data, its manual handling, and the associated costs make this task complex and time-consuming.

Superb's AI-powered solution, Curate, comes to the rescue in this scenario. It empowers ML teams to identify, label, and utilize the most significant data for any given situation. Curate addresses one of the most significant challenges in computer vision - separating valuable data from the rest, sidestepping selection bias and class imbalances, and distinguishing between 'bad' data and useful edge cases.

The Struggle of Scaling

While most teams are capable of visually identifying relevant data, the task of manually reviewing tens of thousands of data points is neither scalable nor feasible for most organizations. Also, this method doesn't offer a systematic way to prioritize data for labeling or guarantee a balanced distribution of samples with minimal redundancy.

The absence of systematic metadata design and collection during the data acquisition phase further complicates manual search and review. The inability to search unannotated data leads many teams to adopt a "more the merrier" approach, which often leads to diminishing returns in terms of model performance and data preparation costs.

Curate: Automating the Art of Curation

Curate solves this challenge by providing organizations with an easy way to search, manage, and visualize their data at scale. It automates the curation process, avoiding significant computational and infrastructure costs.

This automation enables ML teams to identify what data to label, create data distribution that mirrors real deployment data, generate balanced training and validation sets, and detect outliers and anomalies effortlessly.

By eliminating the inconsistent and costly human elements from data curation and management workflows, Curate helps organizations reduce the cost of their computer vision projects. This reduction in cost includes labeling, thereby boosting the return on investment.

Curate’s Auto-Curate

Superb Curate offers your team the ability to automatically curate, at the dataset or slice level, the most suitable dataset for your model requirements. This feature, dubbed Auto-Curate, considers data sparsity, label noise, class balance, and feature balance when categorizing and curating data for you.

Auto-Curate reduces the cost of curation and enables the creation of a high-performance model with a more accurate and well-curated dataset. Curate provides several automated curation methods:

What to label: Identifies the most crucial data points to label first.

Best for curating a balanced slice: Ensures your full dataset is well-represented.

Split train/validation set: Automatically splits your labeled dataset, ensuring unbiased model validation.

Edge case detection: Identifies sparsely located data points, ensuring robustness and performance in all scenarios.

Find mislabels: Identifies data points that are most likely mislabeled, ensuring a high-quality labeled dataset.

Augmentation: A Practical Scenario

To further understand data augmentation, consider a scenario in machine learning where we want to build a model to classify different dog breeds. Suppose the dataset contains an abundance of images for most breeds, but falls short when it comes to a specific breed, say pugs. As a result, the model's capacity to accurately classify pugs would be considerably compromised due to the dearth of available data.

In this situation, data augmentation can provide a solution. By adding more images of pugs - either real, synthetically generated, or by manipulating existing images (e.g., replicating and distorting them to create unique instances) - we can enhance the model's ability to classify this under-represented breed correctly.

As the dataset grows in diversity and volume through these augmentation processes, the machine learning algorithm's performance subsequently improves. The additional data can range from images to text, making data augmentation a versatile tool applicable across various data types and machine learning tasks.

But does data augmentation improve the accuracy of the model? In most cases, the answer is yes. Data augmentation techniques have consistently demonstrated their ability to enhance the model's accuracy. By reducing the propensity of models to overfit the training data, they improve the model's generalization capabilities, leading to better performance on unseen or new data.

Combating Limited and Imbalanced Data

Data augmentation holds particular value when dealing with rare or under-represented cases in datasets. This feature becomes a potent weapon against the problem of limited or imbalanced data, ultimately resulting in models that can deliver more accurate and reliable results, even in challenging scenarios.

Data Augmentation Challenges

In spite of the proven benefits of data augmentation in machine learning, there exist certain challenges that pose significant hurdles, especially when addressing class or scenario imbalance in datasets. These issues can often not be solved merely by collecting more data.

Difficulty in Preserving Original Information: The risk of altering or losing vital information is ever-present during the augmentation process. For example, excessive rotation or scaling of an image can make it unrecognizable or irrelevant for the task at hand.

Creating Unnatural Data Instances: Data augmentation methods could potentially generate instances that are not representative of the actual category or class, leading to unrealistic results.

Resolving Underlying Data Imbalances: Simple augmentation techniques may not suffice to address severe imbalances in the original dataset. More complex techniques such as GANs or synthetic data generation may be required, which come with their own set of challenges.

Overfitting to augmented data: There's a risk that the model may overfit to the types of augmentations applied, limiting its ability to generalize to unseen data.

Computational cost: Advanced augmentation techniques like GANs and style transfer are computationally intensive and require substantial resources and time.

Managing bias: The augmentation process can inadvertently introduce or exacerbate biases present in the original data, which might be particularly detrimental in cases of imbalance.

Real-World Examples and Use Cases

Data augmentation has proven to be a game-changer across a range of sectors, playing a pivotal role in enhancing the performance of machine learning models.

This is especially true when the data is scarce, unbalanced, or replete with rare instances. Industry-specific case studies underscore the widespread influence and significance of data augmentation.

Healthcare: Disease Diagnosis

The healthcare industry often grapples with the scarcity of data, particularly when it comes to rare diseases like Alkaptonuria. A team of researchers sought to enhance early detection of this genetic disease through a deep learning model. However, limited images related to the disease complicated the training process.

To address this, they employed data augmentation techniques, including rotation, flipping, and zooming on the available images, to create more data for the model to learn from. Beyond these strategies, they also contemplated using tools like Superb Curate's Auto-Curate feature, which provides a robust and automated method to curate data according to specific needs, on the entire dataset or on customized slices of data.

In the context of diagnosing Alkaptonuria, Auto-Curate's 'Curate What to Label' option could guide researchers to prioritize which unlabeled data should be labeled first, based on factors like data sparsity, label noise, class balance, and feature balance. Post labeling, the 'Split Train/Validation Set' option can help split the dataset into training and validation sets, improving the model's generalization performance, and avoiding overfitting.

The 'Find Edge Cases' option could help identify rare instances of the disease in the dataset, thus improving the model's accuracy and diversity. Additionally, 'Find Mislabels' can be used to identify and rectify labeling errors that could impact the reliability of the model.

Automotive: Self-Driving Cars

In the automotive industry, companies like Waymo utilize a method called "rare scenario simulation" to create numerous variations of edge-case driving scenarios. This method, while effective, could be further enhanced with tools like Superb Curate's Auto-Curate feature.

Auto-Curate can help in creating well-balanced training and validation sets, which is crucial in improving the model's generalization capabilities and avoiding overfitting. It also helps in identifying and incorporating edge cases into the training dataset, which can drastically improve model accuracy.

Retail: Image Recognition for Product Categorization

In retail, machine learning is extensively used for tasks such as product categorization based on images. However, certain product categories might be underrepresented in the dataset, leading to inaccurate categorization.

eBay tackled this challenge by using Generative Adversarial Networks (GANs) to create synthetic images of underrepresented product categories. While GANs have been beneficial, incorporating tools like Superb Curate's Auto-Curate feature could further improve the categorization accuracy. Auto-Curate's 'Curate What to Label' option could help identify high-value data, including underrepresented product categories, ensuring a more balanced dataset.

The 'Find Edge Cases' feature could be used to identify rare product categories, improving model accuracy and diversity. Furthermore, potential labeling errors can be identified and corrected with the 'Find Mislabels' feature, thereby further enhancing the reliability and performance of the model."

Conventional Data Augmentation Techniques

To overcome limitations in data quantity and quality, various data augmentation techniques can be applied. These techniques enhance the diversity of the data, allowing the model to learn from a variety of scenarios. Here, we detail common data augmentation techniques for different types of data, including images, text, and video.

Image Data Augmentation

Image data augmentation is a standard procedure in machine learning. By creating variations of the available data, the model is exposed to different perspectives, thereby improving its ability to generalize. The following are some common techniques:

1. Geometric transformations:

Flipping, rotating, scaling, and cropping are standard techniques used to increase data diversity. They offer the advantage of maintaining the object's essential features while altering its appearance slightly.

2. Photometric transformations:

Adjusting brightness, contrast, and saturation can also significantly enhance data diversity. They are especially useful in preparing a model for different lighting conditions.

3. Noise injection:

Adding artificial noise to the image can help a model become more resilient to imperfect real-world data.

Text Data Augmentation

Text data augmentation aims to alter the textual context without changing its semantics, thus increasing the model's understanding and reducing overfitting. Some of the popular techniques are:

Synonym Replacement: This technique involves replacing words in the text with their synonyms, maintaining the sentence's overall meaning.

Random Insertion: A random word that fits the sentence context is inserted at a random position.

Random Deletion: Randomly removing words from the sentences.

Back Translation: The text is translated into a different language and then translated back to the original language, often leading to slight changes in sentence structure.

Video Data Augmentation

Video data augmentation is used to enhance the variety in video datasets. Some of the commonly used methods are:

Temporal Transposition: The video frames are rearranged in a different order to create diversity.

Frame Removal: Some frames are randomly removed to create different sequences.

Speed Alteration: The playback speed of the video is altered, either sped up or slowed down.

Spatial Transformations: Similar to images, geometric and photometric transformations can be applied to video data.

Advanced Techniques for Data Augmentation

While the above techniques are widely used and generally effective, they might be insufficient for certain exceptional cases. Hence, more advanced techniques such as Generative Adversarial Networks (GANs), meta-learning, neural-style transfer, and reinforcement learning have been developed to address such challenging scenarios.

1. Generative Adversarial Networks (GANs):

GANs can generate new data instances that are almost indistinguishable from the real ones. For example, GANs can be trained to create more images of rare classes, thereby overcoming the issue of rarity.

Additionally, GANs can enhance the effectiveness of Convolutional Neural Networks (CNNs) by generating new samples for the training dataset, potentially outperforming traditional data augmentation techniques.

Meta-learning, or "learning to learn," is a subfield of machine learning where algorithms learn from other machine learning algorithms. In the deep learning domain, it refers to the optimization of neural networks via other neural networks. Meta-learning can be used to create high-level elements for training neural networks, offering a unique advantage for data augmentation.

3. Neutral Style Transfer

This technique leverages deep learning to apply the style of one image (like an artistic style) onto another. This allows for the creation of new data instances with the same core features but different styles. Neural style transfer-based augmentation does come with challenges like deciding the style, slow running times, and high storage and memory capacity needs.

4. Reinforcement Learning

Reinforcement learning (RL) can be enhanced with augmented data to ensure that agents are prepared to perform in a broad range of scenarios.

The RL based augmentation involves training an agent to make decisions in an environment to maximize some notion of cumulative reward. By providing a variety of scenarios through data augmentation, we can make our RL agents more robust and capable of handling new unseen scenarios.

Automating Augmentation for Data Management

The quality, diversity, and balance of datasets is a crucial determinant of a model's performance. The inherent challenges of collecting vast quantities of diverse data, especially for rare or infrequent cases, necessitates the use of innovative strategies like data augmentation. This technique improves the model's ability to learn by enhancing existing datasets, creating a broader range of scenarios for the model to train on.

However, data augmentation is not without its challenges, including the risk of altering vital information, generating non-representative data instances, managing underlying data imbalances, preventing overfitting to augmented data, and dealing with computational costs and potential biases.

Superb's AI-powered solution, Curate, plays an essential role in managing these challenges by offering a platform that automates the curation process, thus allowing organizations to manage their data effectively and efficiently. Through techniques like Auto-Curate, Curate helps to identify and label significant data points, create a balanced dataset, and detect outliers and anomalies.

Ultimately, the success of machine learning projects hinges on the careful application of these techniques and tools, to ensure that models are trained on high-quality, diverse, and balanced data, enabling them to deliver accurate and reliable results in real-world applications.